
Thread: Anandtech News

-

09-01-17, 01:30 PM #7311

Anandtech: Logitech's G613 "Lightspeed" Wireless Mechanical Keyboard Cuts Wires & Inp

Logitech this week introduced its new wireless mechanical keyboard aimed at gamers, touting its low input lag for a wireless keyboard as a defining feature. While the Logitech G613 was designed for gamers and has a number of gaming-oriented features, it is not overloaded with them, and it can connect to two host systems using different wireless technologies, which makes it suitable for business environments as well.

Mechanical keyboards for gamers and wireless keyboards have existed for ages, but well-known keyboard manufacturers have refrained from wedding “gaming” and “wireless” for multiple reasons, relatively high input lag being the primary one. Logitech’s Lightspeed platform promises to cut input lag by optimizing the internal architecture of keyboards and mice, reducing the polling interval of wireless receivers to 1 ms (a 1000 Hz polling rate), increasing signal strength, applying a proprietary frequency-agility mechanism to avoid interference, and optimizing drivers. Lightspeed-enabled input devices use special receivers, which are different from Logitech’s popular Unifying receivers.
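To put the 1 ms figure in context, here is a minimal sketch of how a polling interval turns into added delay. It assumes key presses arrive at random times between polls, so an event waits half an interval on average and one full interval at worst. The 8 ms (125 Hz) baseline is an assumed comparison point, a common USB default, not a Logitech figure. The sketch ignores every other source of latency.

```python
def polling_rate_hz(interval_ms):
    """Convert a polling interval in milliseconds to a polling rate in hertz."""
    return 1000.0 / interval_ms


def polling_delay_ms(interval_ms):
    """Extra wait for the next poll as (average, worst case), in milliseconds.

    Assumes key presses arrive uniformly at random between polls.
    """
    return interval_ms / 2, interval_ms


# 8 ms (125 Hz) is an assumed baseline for comparison; 1 ms is the Lightspeed figure.
for interval in (8.0, 1.0):
    avg, worst = polling_delay_ms(interval)
    print(f"{interval:.0f} ms interval = {polling_rate_hz(interval):.0f} Hz: "
          f"+{avg} ms on average, +{worst} ms worst case")
```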

The Logitech G613 mechanical wireless keyboard uses the company’s Romer-G switches, which feature a 1.5 mm actuation distance and are rated for 70 million key presses. The device is equipped with six programmable keys and media playback control keys, and it can connect to hosts using either Lightspeed or Bluetooth radio technology. Each G613 keyboard comes with a bundled Lightspeed receiver, which requires a USB Type-A port as well as Windows 7 or later, Mac OS X 10.8 or later, ChromeOS, or Android 3.2 or later to work.

The Logitech G613 looks rather minimalistic: it has no programmable RGB LED lighting and resembles an advanced office keyboard with a palm rest, so apart from gamers it can appeal to other demanding users as well. One of the key features that Logitech advertises for the G613 is its battery life. It can operate on two AA batteries for up to 18 months, which will be appreciated by people who care not only about gaming performance but about overall convenience.

Logitech says that the G613 mechanical wireless keyboard will be available shortly for $149.99 in the U.S. Prices in other countries may vary.


Related Reading:

- Capsule Review: Logitech MK850 Performance Wireless Keyboard and Mouse Combo

- The Logitech G910 Orion Spectrum Mechanical Keyboard Review

- Logitech Announces G610 Orion Brown And G610 Orion Red Mechanical Keyboards

- Corsair Launches Splash-Resistant K68 Mechanical Keyboard

- Nanoxia Ncore Retro: Mechanical, Water Resistant, 'Warehouse 13' Style Keyboard

- The Das Keyboard 'Prime 13' & '4 Professional' Mechanical Keyboard Review

- The Cherry MX Board 6.0 Mechanical Keyboard Review


-

09-01-17, 02:16 PM #7312

Anandtech: Philips Demos 328P8K: 8K UHD LCD with Webcam, Docking, Coming in 2018

TPV Technology is demonstrating a preliminary version of its upcoming 8K ultra-high-definition display at the IFA trade show in Germany. The Philips 328P8K monitor will be part of the company’s professional lineup and will hit the market sometime next year.

Philips is the second mass-market brand to announce an 8K monitor after Dell, which has been selling its UltraSharp UP3218K for about half a year now. The primary target audiences for the 328P8K and the UP3218K are designers, engineers, photographers and other professionals looking for maximum resolution and accurate colors. Essentially, Dell's 8K LCD is going to get a rival with the same resolution.

At present, TPV has revealed only basic specifications of its Philips 328P8K display: a 31.5” IPS panel with a 7680x4320 resolution, 400-nit brightness (which it calls HDR 400) and presumably a 60 Hz refresh rate. As for color spaces, TPV confirms that the 328P8K covers 100% of AdobeRGB, which indicates that the company positions the product primarily for graphics professionals. Connectivity appears to mirror Dell’s 8K monitor. The Philips 8K display will use two DP 1.3 cables, avoiding DP 1.4 with Display Stream Compression 1.2 in order to ensure flawless and accurate image quality.
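Simple bandwidth arithmetic explains the two-cable arrangement. Each DisplayPort 1.3 link runs four HBR3 lanes at 8.1 Gbps, and 8b/10b coding leaves 80% of that for data. The pixel format in this sketch, 24 bits per pixel (8-bit RGB), is our assumption and not a disclosed Philips spec. The sketch also ignores blanking intervals, so real requirements are somewhat higher.

```python
import math

# DisplayPort 1.3 HBR3: 8.1 Gbps per lane, 4 lanes, 8b/10b coding (80% payload).
HBR3_LANE_GBPS = 8.1
LANES = 4
CODING_EFFICIENCY = 0.8


def dp13_payload_gbps():
    """Usable data rate of one DisplayPort 1.3 link (about 25.92 Gbps)."""
    return HBR3_LANE_GBPS * LANES * CODING_EFFICIENCY


def active_video_gbps(width, height, refresh_hz, bits_per_pixel):
    """Uncompressed active-pixel data rate in Gbps, ignoring blanking intervals."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


needed = active_video_gbps(7680, 4320, 60, 24)  # 24 bpp (8-bit RGB) is an assumption
per_link = dp13_payload_gbps()
links = math.ceil(needed / per_link)
print(f"8K60 at 24 bpp: {needed:.2f} Gbps vs {per_link:.2f} Gbps per DP 1.3 link "
      f"-> {links} links")
```

At 10 bits per channel, the same arithmetic gives roughly 59.7 Gbps. That is more than two links can carry, so this sketch does not settle which pixel format the monitor actually uses at 60 Hz.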

It is noteworthy that the final version of the 328P8K will be equipped with a webcam (something the current prototype lacks), two 3W speakers, as well as USB-A and at least one USB-C port “allowing USB-C docking and simultaneous notebook charging”. To use USB-C docking with this 8K monitor, the laptop has to support DP 1.4 alternate mode over USB-C, and at present this tech is not supported by shipping PCs. That said, USB-C may be used more widely as a display output in the future. The USB-C input on the Philips 328P8K therefore seems like a valuable future-proof feature (assuming, of course, that it fully supports DP 1.4 alt mode over USB-C).

Preliminary Specifications

| Philips 328P8K | 32" Ultra HD 8K |
|---|---|
| Panel | 31.5" IPS |
| Resolution | 7680 × 4320 |
| Brightness | 400 cd/m² |
| Contrast Ratio | 1300:1 (?) |
| Refresh Rate | 60 Hz |
| Viewing Angles | 178°/178° horizontal/vertical |
| Color Saturation | 100% Adobe RGB, 100% sRGB |
| Display Colors | 1.07 billion (?) |
| Inputs | 2 × DisplayPort 1.3 |
| Audio | 2 × 3W speakers |
| USB Hub | USB-A and USB-C ports |

Philips does not disclose whose panel it uses for the monitor. Given that the specs of the Philips 328P8K are similar to those of the UP3218K, however, it is highly likely that both models use the same panel from LG Display (with which TPV has a joint venture in China). Meanwhile, Dell’s UP3218K ended up supporting 98% of the DCI-P3 color gamut (in addition to 100% of the AdobeRGB and 100% of the sRGB color spaces). If the panels are the same, the Philips 328P8K may well support DCI-P3 too. In fact, the company has published a marketing rendering of the 328P8K showing Adobe Photoshop CC running under macOS. Apple has been gradually transitioning its own devices to P3-capable displays for some time now, so offering Apple customers a non-P3 monitor in 2018 does not seem like a bright idea. I'd be surprised if we don't see DCI-P3 support in the final version.

TPV intends to ship its Philips 328P8K sometime in Q1 or Q2 next year, but the company has not settled on a final timeframe. High-end products require a lot of tweaking, so do not expect TPV to rush the 8K monitor to market. As for pricing, it is hard to make guesses without knowing the market situation, panel availability and competition. For example, Dell has cut the price of its UltraSharp UP3218K by 22% since its launch in late March, to $3,899, despite the lack of any rivals. In any case, since the Philips 328P8K is aimed primarily at professionals, do not expect it to be affordable from a consumer point of view.
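The quoted 22% cut can be reversed to recover the approximate launch price. This is a minimal sketch: the 22% figure is rounded, so the result is approximate. It lands at roughly $5,000, which is consistent with the price Dell launched the UP3218K at.

```python
def implied_original_price(current_price, cut_fraction):
    """Back out the pre-cut price from a current price and a fractional price cut."""
    return current_price / (1 - cut_fraction)


launch = implied_original_price(3899, 0.22)
print(f"Implied UP3218K launch price: ~${launch:,.0f}")
```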

Related Reading:

- Cosemi Announces 328-Feet ‘8K-Ready’ OptoDP Active DisplayPort 1.4 Optical Cable

- Dell’s 32-inch 8K UP3218K Display Now For Sale: Check Your Wallet

- Dell Announces UP3218K: Its First 8K Display, Due in March

- CEATEC 2016: Sharp Showcases 27-inch 8K 120Hz IGZO Monitor with HDR, also 1000 PPI for VR


-

09-02-17, 05:50 AM #7313

Anandtech: Lenovo Launches Yoga 920 Convertible: 13.9 4K LCD, 8th Gen Core i7, TB3,

Lenovo this week announced its new Yoga 920 convertible laptop. It is more powerful than its predecessor thanks to Intel’s upcoming 8th generation Core i-series CPUs with up to four cores, better connected thanks to two Thunderbolt 3 ports, and yet slimmer. The new model inherits most of the characteristics of the previous-generation Lenovo Yoga 900-series notebooks and improves on them in various ways.

The new Lenovo Yoga 920 is the direct successor to the Yoga 2 Pro, Yoga 3 Pro, Yoga 900 and Yoga 910 convertible laptops that Lenovo launched from 2013 to 2016. These machines are aimed at creative professionals who want high performance, a 360° watchband hinge, a touchscreen, low weight and long battery life. Over the years, Lenovo has changed the specs and design of its hybrid Yoga-series laptops quite significantly from generation to generation in a bid to improve the machines. This time the changes are not drastic, but still rather significant, both inside and outside.

The new Lenovo Yoga 920 will come with a 13.9” IPS display panel featuring very thin bezels and either 4K (3840×2160) or FHD (1920×1080) resolution. These are exactly the same panel options that are available for the Yoga 910. Meanwhile, Lenovo moved the webcam from the bottom of the display bezel to the top. It also reshaped the chassis slightly and sharpened its edges, making the Yoga 920 resemble Microsoft’s Surface Book. Changes to the internal and external design of the new Yoga versus its predecessor enabled Lenovo to slightly reduce the thickness of the PC from 14.3 to 13.95 mm (0.55”) and cut its weight from 1.38 kilograms to 1.37 kilograms (3.02 lbs).

Internal differences between the Yoga 920 and the Yoga 910 seem to be no less significant than the external ones. In addition to the new Core i 8000-series CPU (presumably a U-series SoC with up to four cores and the HD Graphics 620 iGPU), the Yoga 920 also gets a new motherboard with a different layout and feature set. The new mainboard has two Thunderbolt 3 ports (instead of the two USB 2.0/3.0 Type-C headers on the Yoga 910) for charging, connecting displays/peripherals and other things. In addition, the new motherboard moves the 3.5-mm TRRS Dolby Atmos-enabled audio connector to the left side of the laptop. Speaking of audio, the Yoga 920 is equipped with two speakers co-designed with JBL as well as far-field microphones that can activate Microsoft’s Cortana from four meters (13 feet) away. As for other specifications, expect the Yoga 920 to be similar to its predecessor: up to 16 GB of RAM (expect a speed bump), a PCIe SSD (with up to 1 TB capacity), an 802.11ac Wi-Fi + Bluetooth 4.1 module, a webcam, as well as a fingerprint reader compatible with Windows Hello.

The slightly thinner and lighter chassis, as well as different internal components, forced Lenovo to reduce the capacity of the Yoga 920’s battery from 79 Wh to 66 Wh, according to TechRadar. As for battery life, LaptopMag reports that it will remain on par with the previous model: 10.5 hours on one charge for the UHD model and up to 15.5 hours for the FHD SKU.
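Keeping battery life flat with a smaller battery implies a lower average power draw. Here is a minimal back-of-the-envelope sketch. It assumes the full rated capacity is usable and takes the quoted runtimes at face value, since the test conditions behind them are not stated.

```python
def average_power_w(capacity_wh, runtime_h):
    """Average system power implied by draining a battery over a given runtime."""
    return capacity_wh / runtime_h


YOGA_920_WH = 66.0
YOGA_910_WH = 79.0

for sku, hours in (("UHD", 10.5), ("FHD", 15.5)):
    print(f"{sku}: ~{average_power_w(YOGA_920_WH, hours):.1f} W average draw")

# Matching the old runtime with less stored energy requires a proportionally lower draw.
required_cut = 1 - YOGA_920_WH / YOGA_910_WH
print(f"Required reduction in average power: ~{required_cut:.0%}")
```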

Lenovo will offer an optional Lenovo Active Pen 2 with 4,096 levels of pressure sensitivity for its Yoga 920. The stylus will cost $53 and will enable people to draw or write on the touchscreen.

Lenovo Yoga Specifications

| | Yoga 900 | Yoga 910 (up to) | Yoga 920 (up to) |
|---|---|---|---|
| Processor | Intel Core i7-6500U (15W) | Intel Core i7-7000 series | Intel Core i7-8650U |
| Memory | 8-16GB DDR3L-1600 | Up to 16 GB | Up to 16 GB |
| Graphics | Intel HD 520 (24 EUs, Gen 9) | Intel HD Graphics 620 | Intel HD Graphics 620 |
| Display | 13.3" Glossy IPS, 16:9 QHD+ (3200x1800) LED | 13.9" 4K (3840 x 2160) IPS or 13.9" FHD (1920x1080) IPS | 13.9" 4K (3840 x 2160) IPS or 13.9" FHD (1920x1080) IPS |
| Hard Drive(s) | 256GB/512GB SSD (Samsung ?) | Up to 1 TB PCIe SSD | Up to 1 TB PCIe 3 x4 SSD (Samsung PM961) |
| Networking | Intel Wireless AC-8260 (2x2:2 802.11ac) | 2x2:2 802.11ac | 2x2:2 802.11ac |
| Audio | JBL Stereo Speakers, Dolby DS 1.0, TRRS jack | JBL Stereo Speakers with Dolby Audio, TRRS jack | JBL Stereo Speakers with Dolby Atmos, TRRS jack |
| Battery | 4 cell 66Wh | 79 Wh | 66 Wh |
| Buttons/Ports | Power Button, 2 x USB 3.0-A, 1 x USB 3.0-C, Headset Jack, SD Card Reader, DC In with USB 3.0-A Port | Power Button, 1 x USB 3.0-A, 1 x USB 3.0-C, 1 x USB 2.0-C for charging, Headset Jack | Power Button, 1 x USB 3.0-A, 2 x Thunderbolt 3, Headset Jack |
| Back Side | Watchband Hinge with 360° Rotation, Air Vents Integral to Hinge | Watchband Hinge with 360° Rotation, Air Vents Integral to Hinge | Watchband Hinge with 360° Rotation, Air Vents Integral to Hinge |
| Dimensions | 12.75" x 8.86" x 0.59" (324 x 225 x 14.9 mm) | 12.72" x 8.84" x 0.56" (322 x 224.5 x 14.6 mm) | 13.95 mm (0.55”) thick |
| Weight | 2.8 lbs (1.3 kg) | 3.04 lbs (1.38 kg) | 3.02 lbs (1.37 kg) |
| Extras | 720p HD Webcam, Backlit Keyboard | 720p HD Webcam, Backlit Keyboard | 720p HD Webcam, Backlit Keyboard |
| Colors | Platinum Silver, Clementine Orange, Champagne Gold | Platinum Silver, Champagne Gold, Gunmetal | Silver, Bronze, Copper |
| Pricing | $1200 (8GB/256GB), $1300 (8GB/512GB), $1400 (16GB/512GB) | Starting from $1299 | Starting from $1329 |

The Lenovo Yoga 920 convertible laptops will be available in silver, bronze and copper colors later this year, starting from $1329 (a slight price bump over the predecessor). By contrast, the Yoga 910 came in silver, gold and dark grey (which the manufacturer called gunmetal).


Related Reading:

- Lenovo Reveals Yoga 910 Convertible: Intel’s Kaby Lake Meets 4K Display and Ultra-Thin Form-Factor

- The Lenovo Yoga 900 Series Launched: The ‘Thinnest’ Core Laptop and a 27-inch Portable All-In-One

- Lenovo’s Yoga Book Convertible Scraps Physical Keyboard in Favor of Touch-Sensitive Surface

- Lenovo Yoga Tab 3 Plus: Snapdragon 652, 10-Inch 2K Display, JBL Speakers and USB-C

- Lenovo Updates The X1 Lineup: Thin Bezel X1 Carbon, X1 Yoga And X1 Tablet Updates

- The Lenovo ThinkPad X1 Yoga Review: OLED and LCD Tested


-

09-02-17, 07:22 AM #7314

Anandtech: IFA 2017: Huawei Artificial Intelligence Keynote Live Blog (8am ET, Noon U

Huawei has a keynote at IFA this year. Huawei quietly announced the Kirin 970 and its new Neural Processing Unit yesterday, without a word through the regular press channels. At the keynote, we're expecting to hear about Huawei's future march into AI from Richard Yu, CEO of Huawei's Consumer Business Group (CBG).


-

09-02-17, 10:03 AM #7315

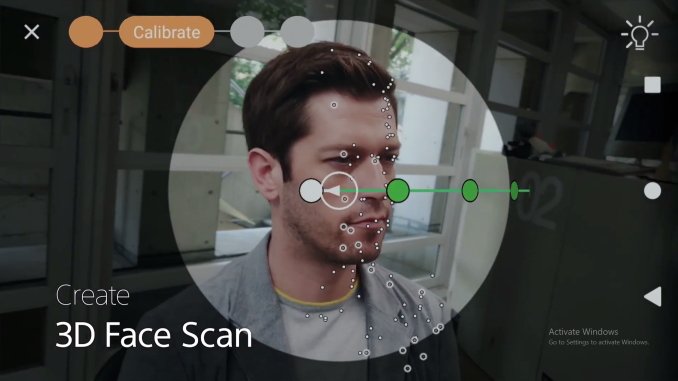

Anandtech: Generating 3D Models in Mobile: Sony's 3D Creator Made Me a Bobblehead

At a show like IFA, it’s easy to get wide-eyed about a flashy new feature that is being heavily promoted but might have limited use. Normally, something like Sony’s 3D Creator app would fall under this umbrella. It is a tool that creates a 3D wireframe model of someone’s head and shoulders and then applies a 4K texture over the top. What prompted me to write about it is the implementation.

Normally, creating a 3D model from a single photo without accompanying depth map data is difficult. Even with depth data, the map only covers points directly in front of the camera. It says nothing about what is around the corner, especially when it comes to generating a texture from the image data to fit the model. With multiple photos, points can be correlated across images (perhaps also using internal x/y/z sensor data). Distances can then be measured for identical points, and a full depth map can be built. Taking the color data from the pixels and working out where each pixel falls in that depth map then allows the wireframe model to be textured.
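In its simplest form, correlating the same point across photos comes down to triangulation. This minimal sketch assumes the easiest case: two rectified views with a known focal length and a known camera offset (baseline). Real photogrammetry tools, and Sony's app, whose method is undisclosed, solve a more general multi-view problem. All numbers below are hypothetical. Once a matched pixel has a 3D position, attaching that pixel's color to the point is the essence of texturing.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Distance Z of a point seen in two rectified views: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_px * baseline_m / disparity_px


def back_project(u, v, depth_m, focal_px, cx, cy):
    """Pinhole back-projection of pixel (u, v) at a known depth to camera-space X, Y, Z."""
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return x, y, depth_m


# Hypothetical numbers: 1500 px focal length, 10 cm between the two shots,
# and a matched feature that shifts 300 px between them.
z = depth_from_disparity(1500, 0.10, 300)
point = back_project(u=2120, v=1080, depth_m=z, focal_px=1500, cx=1920, cy=1080)
print(z, point)
```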

For anyone who follows our desktop CPU coverage, we’ve actually been running a benchmark that does this for the last few years. Our test suite runs Agisoft Photoscan, which takes a set of high-quality images (usually 50+ images) of people, of items, of buildings and of landscapes, and builds a 3D textured model to be used in displays, games, and anything that wants a 3D model. Normally this benchmark is computationally expensive: Agisoft splits the work into four segments:

- Alignment

- Point Cloud Generation

- Mesh Building

- Texture Building/Skinning

Each of these segments has dedicated algorithms, and the goal is to compute them as fast as possible. Some of the algorithms are serial and rely heavily on single-thread performance. Others, such as Mesh Building, are highly parallel, which Agisoft exploits via OpenCL. This allows any OpenCL-capable accelerator, such as a GPU, to increase the performance of this test. For low core count CPUs, this is usually the longest part of the full benchmark. Higher core count parts, however, run into other bottlenecks, such as memory or cache.
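Amdahl's law captures this split between serial and parallel stages. The minimal sketch below uses hypothetical stage timings, not measured Photoscan numbers. It shows that adding cores speeds up the parallel stages, but the serial stages soon dominate. It also ignores the memory and cache bottlenecks mentioned above, which make real scaling worse.

```python
def amdahl_time(serial_s, parallel_s, cores):
    """Runtime when only the parallel portion scales with core count (Amdahl's law)."""
    return serial_s + parallel_s / cores


def speedup(serial_s, parallel_s, cores):
    """Speedup over a single core for the same serial/parallel split."""
    return amdahl_time(serial_s, parallel_s, 1) / amdahl_time(serial_s, parallel_s, cores)


# Hypothetical single-core timings: 600 s of serial work, 2400 s of parallel work.
SERIAL_S, PARALLEL_S = 600.0, 2400.0
for cores in (1, 2, 4, 8, 16, 64):
    t = amdahl_time(SERIAL_S, PARALLEL_S, cores)
    print(f"{cores:>2} cores: {t:7.1f} s  ({speedup(SERIAL_S, PARALLEL_S, cores):.2f}x)")
```

With these numbers, the speedup can never exceed 5x (3000 s / 600 s), however many cores are added.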

For our Agisoft runs in those benchmarks, we use a set of 50 4K images of a building. We have the algorithm select 50000 points from each image and use those for mesh building. We typically run it with OpenCL off, as we are testing the CPU cores, although Ganesh has seen some minor speedup on this test with Intel’s dual-core U-series CPUs when enabling OpenCL. A high-end but low-power processor, such as the Core i5-7500T, takes nearly 1500 seconds, or 25 minutes, to run our test. We also see speedups from larger caches and from DRAM frequency/latency, but major parts of the app rely exclusively on either single-thread or multithread performance.

Sony’s way of creating the 3D head model involves panning the camera from one ear to the other, and then moving the camera around the head to generate finer detail and texture information. It does all of this in situ, computing on the fly and showing the results on screen in real time as the scan is being done. The whole process takes a minute, which is super quick compared to the method outlined above. Of course, Sony’s implementation is limited to heads rather than something like buildings, and Sony told us that its models are limited to 50000 polygons. During the demonstration I was given, I could see the software generating points on the head. The number of points was clearly in the hundreds in total, rather than the thousands per static image, so there is a perceptible difference in quality. Still, the Sony modeling implementation gives a good visual output.

The Sony smartphones that support this feature are the XZ series, which have Snapdragon 835 SoCs inside. Qualcomm is notoriously secretive about what is under the hood of its mobile chips, although features like the Hexagon DSP contained within the chip are announced. Sony would not state how it implements its algorithms. It would not say whether it leverages a compute API on the Adreno GPU, a graphics API, the Kryo CPUs, or something from the special DSPs housed on the chip. This also leads to two further questions: do the algorithms work on other SoCs, and can other Snapdragon 835 smartphone vendors develop their own equivalent application?

Sony’s goal is to let users put their new facial model into applications that support personal avatars, or export it to 3D printing formats to create a real-world copy of their head. My mind instantly jumped to who would use something like this at scale: console players, specifically on Xbox and Nintendo devices, or for special games such as NBA 2K17. Given Sony’s own presence in the console space with the PlayStation 4, one might expect it not to play with competitors. The smartphone department is a different business unit, though (and other Snapdragon 835 players have no potential conflict). The booth demonstrator told me he doesn’t know of any collaboration, which is unfortunate, as I suspect this would be a good opening for this tool.

I’m trying to probe for more information, from Sony on the algorithm or Qualcomm on the hardware, because how the algorithm is implemented on the hardware is something I find interesting given how we’ve tested desktop CPUs in the past. It also puts the challenge to other smartphone vendors that use Snapdragon 835 (or other SoCs) to see if this is a feature that they might want to implement, or if there are apps that will implement this feature regardless of hardware.


Related Reading:

- Sony Launches Xperia XZ Premium and Xperia XZs Phones For US Market

- Sony Announces Xperia XZ and Xperia X Compact

- The Sony Xperia X Preview


-

09-02-17, 11:30 AM #7316

Anandtech: StarTech's Thunderbolt 3 to Dual 4Kp60 Display Adapters Now Available

StarTech's new family of Thunderbolt 3 adapters, which let one TB3 port drive two 4K 60Hz displays, is now available for sale. One of the adapters has two DisplayPort 1.2 outputs, whereas the other features two HDMI 2.0 headers. The devices are bus-powered and do not use any kind of image compression.

When Intel introduced its Thunderbolt 3 interface two years ago, the company noted that one TB3 cable can drive two daisy-chained 4Kp60 displays (as TB3 can carry two complete DisplayPort 1.2 streams), greatly simplifying dual-monitor setups. The reality turned out to be more complicated. At present, not many displays have a Thunderbolt 3 USB Type-C input along with an appropriate output for daisy-chaining another monitor. Monitor makers are reluctant to add extra chips to their products, to save BOM costs and keep designs simple, which effectively hides one of the features of the TB3 interface. Meanwhile, since each TB3 controller supports two DisplayPort 1.2 streams, some PC makers integrate two TB3 ports into their ultra-thin laptops to support two 4Kp60 outputs, whereas others go with four. The new adapters from StarTech solve the problem by providing two DisplayPort 1.2 or HDMI 2.0 headers from a single TB3 connector.


Earlier this year StarTech introduced two devices for customers with monitors featuring DP or HDMI inputs: the Thunderbolt 3 to Dual DisplayPort Adapter (TB32DP2T) and the Thunderbolt 3 to Dual HDMI 2.0 Adapter (TB32HD4K60). StarTech does not disclose much about the internal architecture of the devices. My understanding is that they feature a Thunderbolt 3 controller that “receives” two DisplayPort signals from the host via TB3. It then routes them either directly to two DP outputs or, via LSPCon (DisplayPort-to-HDMI 2.0 protocol converter) chips, to two HDMI 2.0 outputs. Moreover, the TB3 to Dual DisplayPort adapter can even drive a single 5K monitor by using both outputs.
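Rough arithmetic shows why one DP 1.2 stream suffices for a 4Kp60 display while a 5K panel needs both. DisplayPort 1.2 (HBR2) runs four lanes at 5.4 Gbps, and 8b/10b coding leaves 80% of that for data. The sketch assumes 24-bit color and ignores blanking overhead, so it understates real requirements somewhat.

```python
import math

# DisplayPort 1.2 (HBR2): 4 lanes x 5.4 Gbps raw; 8b/10b coding leaves 80% for data.
DP12_PAYLOAD_GBPS = 4 * 5.4 * 0.8  # about 17.28 Gbps


def video_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Uncompressed active-pixel data rate in Gbps (blanking intervals ignored)."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def dp12_streams_needed(width, height, refresh_hz, bits_per_pixel=24):
    """How many DP 1.2 streams the active pixel data alone would occupy."""
    return math.ceil(video_gbps(width, height, refresh_hz, bits_per_pixel) / DP12_PAYLOAD_GBPS)


for name, (w, h) in {"4K": (3840, 2160), "5K": (5120, 2880)}.items():
    print(f"{name}p60: {video_gbps(w, h, 60):.2f} Gbps -> "
          f"{dp12_streams_needed(w, h, 60)} DP 1.2 stream(s)")
```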

The new adapters are compatible with Apple macOS and Microsoft Windows-based PCs. One thing to keep in mind, however, is that the adapters do not support DP or HDMI alt modes over USB-C, so they work only with TB3 ports.

The StarTech Thunderbolt 3 to Dual DisplayPort Adapter (TB32DP2T) is now available either directly from StarTech for $99.99 or from Amazon for $77.97 (a limited time offer, I suppose). Meanwhile, the StarTech Thunderbolt 3 to Dual HDMI 2.0 Adapter (TB32HD4K60) can be pre-ordered from StarTech for $134.99.


Related Reading:

- StarTech Unveils Dual-Display Thunderbolt 2 Docking Station with 12 Ports

- AKiTiO Displays Thunderbolt 3 to 10GBase-T Adapter

- CES 2017: GIGABYTE’s Thunderbolt 3 to 8x USB 3 Dock

- StarTech Launches USB Docking Station With UHD Display Support

- StarTech.com TBT3TBTADAP Thunderbolt 3 to Thunderbolt Adapter Review

- Satechi and StarTech USB 3.1 Gen 2 Type-C HDD/SSD Enclosures Review


-

09-02-17, 01:34 PM #7317

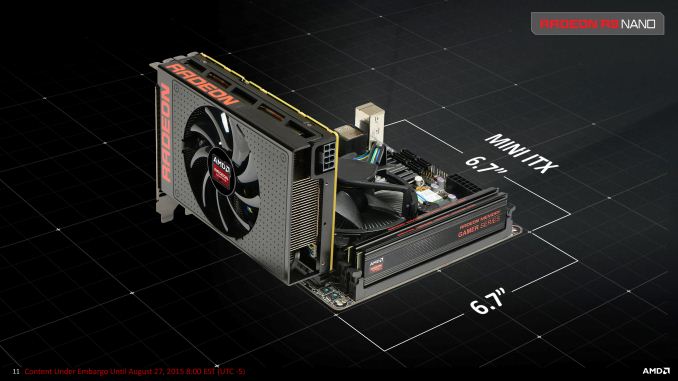

Anandtech: GIGABYTE Unveils GeForce GTX 1080 Mini ITX 8G for SFF Builds

GIGABYTE has outed their GeForce GTX 1080 Mini ITX 8G, the newest entrant in the high-performing small form factor graphics space. At only 169mm (6.7in) long, the company’s diminutive offering is now the second mITX NVIDIA GeForce GTX 1080 card, with the first being the ZOTAC GTX 1080 Mini, announced last December. While the ZOTAC card was described as “the world’s smallest GeForce GTX 1080,” the GIGABYTE GTX 1080 Mini ITX comes in ~40mm shorter, courtesy of its single-fan configuration.

Just fitting within the 17 x 17 cm mITX specification, the GIGABYTE 1080 Mini ITX features a semi-passive 90mm fan (which turns off under certain loads/temperatures), a triple heat pipe cooling solution, and 5+2 power phases. Despite its size, the card maintains reference clocks in Gaming Mode, with OC Mode raising the core clocks by a modest ~2%. Powering it all is a single 8-pin power connector on the top of the card.
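The ~2% figure can be checked against the Gaming Mode and OC Mode clocks listed in the specifications for this card. A trivial sketch:

```python
def uplift_pct(reference_mhz, oc_mhz):
    """Percentage clock increase of OC Mode over the reference (Gaming Mode) clock."""
    return (oc_mhz / reference_mhz - 1) * 100


# Clocks from the GIGABYTE GTX 1080 Mini ITX 8G specifications.
print(f"Base:  +{uplift_pct(1607, 1632):.1f}%")
print(f"Boost: +{uplift_pct(1733, 1771):.1f}%")
```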

The dimensions of the GIGABYTE GTX 1080 Mini ITX actually match GIGABYTE’s previous GTX 1070 Mini ITX and 1060 Mini ITX cards, as well as their OC variants. This is in line with mid-range and high-end mITX cards generally bottoming out at ~170mm lengthwise to match the mITX form factor specification. The exception is the petite 152mm Radeon R9 Nano, a card made even smaller due to the space-saving nature of HBM. This is a non-trivial distinction, as graphics card dimension measurements often do not include the additional length of the PCIe bracket and sometimes delineate length of the PCB rather than the cooling shroud. In any case, the 211mm long ZOTAC GTX 1080 Mini actually extends over mITX motherboards. For SFF enthusiasts, these millimeters matter.

Specifications of Selected Graphics Cards for mITX PCs

| | GIGABYTE GeForce GTX 1080 Mini ITX 8G | ZOTAC GeForce GTX 1080 Mini | AMD Radeon R9 Nano |
|---|---|---|---|
| Base Clock | 1607MHz (Gaming Mode), 1632MHz (OC Mode) | 1620MHz | N/A |
| Boost Clock | 1733MHz (Gaming Mode), 1771MHz (OC Mode) | 1759MHz | 1000MHz |
| VRAM Clock / Type | 10010MHz GDDR5X | 10000MHz GDDR5X | 1Gbps HBM1 |
| VRAM Capacity | 8GB | 8GB | 4GB |
| Bus Width | 256-bit | 256-bit | 4096-bit |
| Power | Undisclosed | 180W (TDP) | 175W (TBP) |
| Length | 169mm | 211mm | 152mm |
| Height | 131mm | 125mm | 111mm |
| Width | Dual Slot (37mm) | Dual Slot | Dual Slot (37mm) |
| Power Connectors | 1 x 8pin (top) | 1 x 8pin (top) | 1 x 8pin (front) |
| Outputs | 1 x HDMI 2.0b, 3 x DP 1.4, 1 x DL-DVI-D | 1 x HDMI 2.0b, 3 x DP 1.4, 1 x DL-DVI-D | 1 x HDMI 1.4, 3 x DP 1.2 |
| Process | TSMC 16nm | TSMC 16nm | TSMC 28nm |
| Launch Price | TBA | ? | $649 |
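The memory rows of the specification table can be turned into peak bandwidth figures. These show what the R9 Nano's 4096-bit HBM interface delivers despite its low per-pin rate. Peak bandwidth is the per-pin data rate times the bus width. This sketch reads the quoted 10010MHz GDDR5X figure as an effective 10.01 Gbps per pin, which is how such figures are usually meant.

```python
def peak_bandwidth_gbs(gbps_per_pin, bus_width_bits):
    """Peak memory bandwidth in GB/s: per-pin data rate x bus width / 8 bits per byte."""
    return gbps_per_pin * bus_width_bits / 8


cards = {
    "GIGABYTE GTX 1080 Mini ITX 8G (GDDR5X)": (10.01, 256),
    "ZOTAC GTX 1080 Mini (GDDR5X)": (10.0, 256),
    "AMD Radeon R9 Nano (HBM1)": (1.0, 4096),
}
for name, (rate, width) in cards.items():
    print(f"{name}: {peak_bandwidth_gbs(rate, width):.1f} GB/s")
```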

In the meantime, the GIGABYTE GTX 1080 Mini ITX will be the fastest 169mm-long card. As for the competition, the R9 Nano is no longer in production, and a Vega-based Nano has so far only been teased at SIGGRAPH 2017.

Details on pricing and availability have not been announced at this time.



-

09-04-17, 09:29 AM #7318

Anandtech: Playing as a Jedi: Lenovo and Disney's Star Wars Augmented Reality Experie

“The chosen one you are, with great promise I see.” Now that Disney owns the Star Wars franchise, the expansion of the universe is seemingly never ending. More films, more toys, and now more technology. We’re still a few years away from getting our own lightsabers [citation needed], but until then Disney has partnered with Lenovo to design a Star Wars experience using smartphones and augmented reality.

Lenovo is creating the hardware: a light beacon, a tracking sensor, a lightsaber controller, and an augmented reality headset designed for smartphones. Lenovo’s approach to AR differs from how Samsung and others are approaching smartphone VR, and from how Microsoft is implementing HoloLens. A pre-approved smartphone is inserted into the headset, and the hardware uses a four-inch diagonal portion of the screen to project an image that reflects off prisms and into the user’s eyes. The effect is that users can still see what is ahead of them, along with the images and details from the screen, limited mostly by the pixel density of the smartphone display.

Lenovo already has the hardware up for pre-order in the US ($199) and the EU (249-299 EUR), and supports a curated list of Android and iOS smartphones. This means that the smartphone has to be on Lenovo’s pre-approved list, which I suspect means the limitation will be enforced at the Play Store level (I didn’t ask about side loading). That said, the headset is designed to accommodate differently sized devices.

In the two-minute demo I participated in, I put on the headset, was handed a lightsaber inside a 10ft diameter circle, and fought Kylo Ren with my blue beam of painful light. Despite attempting harakiri in the first five seconds (to no effect), I was surprised by how clear the image was without any IPD adjustment. The field of view of the headset is only 60 degrees horizontal and 30 degrees vertical. That is bigger than HoloLens and other AR headsets I have tried, but a narrow field of view remains one of the biggest downsides of AR. In the demo, I had to move around and wait to counter-attack: after deflecting a blow or six from Kylo, I was given a time-slow opportunity to strike back. If I rushed to attack while waiting for him to attack, nothing seemed to happen. In typical boss-fight fashion, three successful block/hit combinations rendered me the victor. I didn’t see a health bar, but this was a demo designed to give the user a positive experience.

One thing I did notice is that most of what I saw was not particularly elaborate graphically: 2D menus and a reasonable polygon model. There is no need to render a background, since the real world in front of the user does that job (Lenovo had the demo set up in a specific dark corner for ease of use). That makes this probably a walk in the park for the hardware in the headset. The lightsaber connects directly to the phone via Bluetooth, which I thought might be a little slow, but I didn’t feel any lag. The lightsaber was calibrated slightly incorrectly, but only by a few degrees. I asked about different lightsabers, such as Darth Maul’s variant, and was told that there are possibilities for different hardware in the future. Based on what I saw, though, it was unclear whether they would implement a Wii-mote type of system, with a single controller and a different skin attached. The current limitation is that the physical lightsaber only emits a blue light for the sensor. It does go red, but only when the battery is low. Think about that next time you watch Star Wars: red saber means low batteries.

The possibilities for the AR headset are potentially endless. The agreement at this time is between Lenovo and Disney Interactive, so there is plenty of Disney IP that could feature in the future. Disney also likes to keep experiences on its platform locked down, so I wonder what the possibilities are for Lenovo to work with other developers and IP down the road. My Lenovo guide told me that it is all still very much a work in progress, with the hardware basically done and the software side about to ramp up. The current headset is called ‘Mirage’, and most smartphones should offer 3-4 hours of gameplay per charge.

Pre-orders are being taken now, with shipments expected to start in mid-November. The US price is listed as $199.99 (without tax) and EU pricing at 299.99 EUR (with tax).

| | Lenovo Mirage |
|---|---|
| Headset Mass | 470g |
| Headset Dimensions | 209 x 83 x 155 mm |
| Headset Cameras | Dual Motion Tracking Cameras |
| Headset Buttons | Select, Cancel, Menu |
| Supported Smartphones (as of 9/4) | iPhone 7 Plus, iPhone 7, iPhone 6s Plus, iPhone 6s, Samsung Galaxy S8, Samsung Galaxy S3 (?), Google Pixel XL, Google Pixel, Moto Z |
| Lightsaber Mass | 275g |
| Lightsaber Dimensions | 316 x 47 mm |
| Package Contents | Lenovo Mirage AR Headset, 'Light Sword' Controller, Direction Finder, Smartphone Holder, Lightning-to-USB Cable, USB-C to USB Cable, 2x AA Batteries, 5V / 1A Charger and Power Supply |

Related Reading

- Google I/O 2017: New AR/VR Experiences

- ASUS Announces ZenFone AR and ZenFone 3 Zoom

- Intel EOLs Atom Chip Used for Microsoft HoloLens


-

09-04-17, 10:37 AM #7319

Anandtech: Huawei Mate 10 and Mate 10 Pro Launch on October 16th, More Kirin 970 Deta

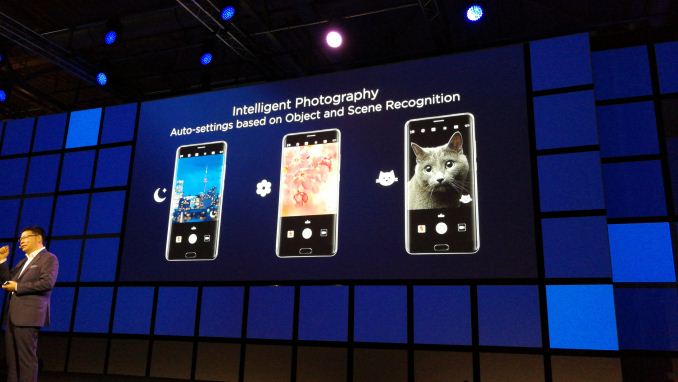

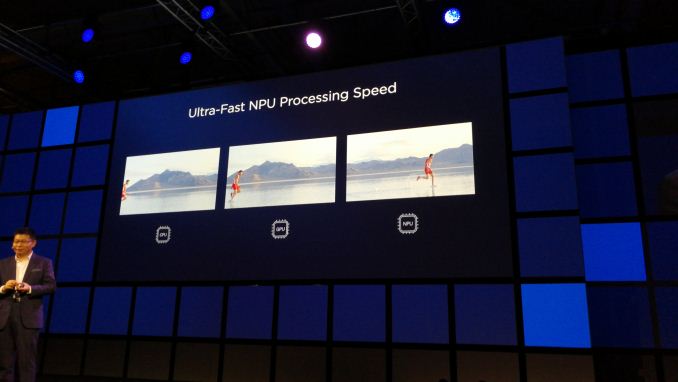

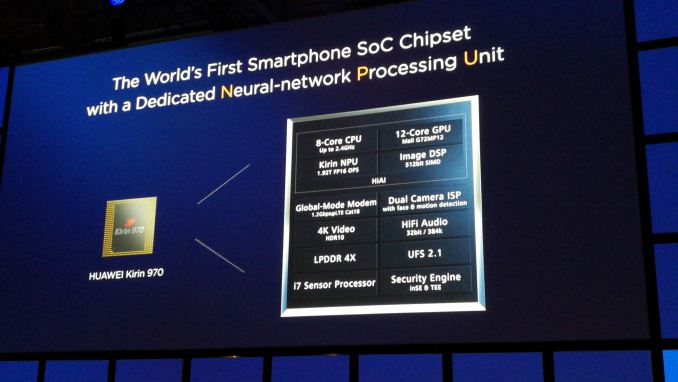

Riding on the back of the ‘not-announced then announced’ initial set of Kirin 970 details, Huawei gave one of the major keynote presentations at the IFA trade show this year. It detailed more of the new SoC, went further into the AI details, and provided some salient information about its next flagship phone. Richard Yu, CEO of Huawei’s Consumer Business Group (CBG), announced that the Huawei Mate 10 and Mate 10 Pro will launch on October 16th at an event in Munich. Both will feature the Kirin 970 SoC and a new minimal-bezel display.

Suffice it to say, that is basically all we know about the Mate 10 at this point: a new display technology, and a new SoC with additional AI hardware under the hood to start using AI to enhance the experience. From our conversations with both Clement Wong, VP of Global Marketing at Huawei, and Christophe Coutelle, Director of Device Software at Huawei, it was clear that they have large but progressive goals for the direction of AI. The initial steps demonstrated involved choosing the best camera settings for a scene by identifying the objects within it, a process that AI hardware can accelerate while consuming less power. The two from Huawei were also keen to probe the press and attendees at the show about what they thought of AI, and in particular the functions it could be applied to. One of the challenges of developing hardware specifically for AI is not really the hardware itself, but the software that uses it.

The Neural Processing Unit (NPU) in the Kirin 970 is using IP from Cambricon Technology (thanks to jjj for the tip, we confirmed it). In speaking with Eric Zhou, Platform Manager for HiSilicon, we learned that the licensing for the IP is different from the licensing agreements in place with, say, ARM. Huawei uses ARM core licenses for their chips, which restricts what Huawei can change in the core design: essentially you pay to use ARM’s silicon floorplan / RTL, and the only choice left is placement on the die (along with voltage/frequency). With Cambricon, the agreement around the NPU IP is more of a joint collaboration: both sides helped progress the IP beyond the paper stage, with updates and enhancements all the way to final 10nm TSMC silicon.

We learned that the IP is scalable, but at this time it will be limited to Huawei devices. Internally, the NPU is built from multiple matrix multiply units, similar to those in Google’s TPU and NVIDIA’s Tensor Cores in Volta. In Google’s first TPU, designed for neural network inference, there was a single 256x256 matrix multiply unit doing the heavy lifting. For the TPUv2, as detailed at the Hot Chips conference a couple of weeks ago, Google has moved to dual 128x128 matrix multiply units. In NVIDIA’s biggest Volta chip, the V100, they have placed 640 tensor cores, each capable of a 4x4 matrix multiply. The Kirin 970 NPU, by contrast, uses a number of 3x3 matrix multiply units, we were told, although the exact count was not provided.
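
Huawei did not describe how work is spread across those 3x3 units. Purely as an illustration of the general technique, the Python sketch below shows blocked (tiled) matrix multiplication, where a larger product is assembled from many fixed-size tile operations, each standing in for one small hardware unit. The names `mmu_3x3` and `tiled_matmul`, and the serial loop order, are our own hypothetical choices, not HiSilicon's or Cambricon's design; real hardware would run many tiles in parallel.

```python
TILE = 3  # tile edge, taken from the 3x3 unit size quoted for the Kirin 970 NPU


def mmu_3x3(a, b, c, i0, j0, k0):
    """Hypothetical 3x3 unit: add A[i0:i0+3, k0:k0+3] @ B[k0:k0+3, j0:j0+3] into C."""
    n, m, p = len(a), len(b), len(b[0])
    for i in range(i0, min(i0 + TILE, n)):          # clip tiles at matrix edges
        for j in range(j0, min(j0 + TILE, p)):
            acc = 0.0
            for k in range(k0, min(k0 + TILE, m)):
                acc += a[i][k] * b[k][j]
            c[i][j] += acc                          # accumulate partial sums across k-tiles


def tiled_matmul(a, b):
    """Multiply an n x m matrix by an m x p matrix by sweeping 3x3 tiles."""
    n, m, p = len(a), len(b), len(b[0])
    c = [[0.0] * p for _ in range(n)]
    for i0 in range(0, n, TILE):
        for j0 in range(0, p, TILE):
            for k0 in range(0, m, TILE):
                mmu_3x3(a, b, c, i0, j0, k0)
    return c


if __name__ == "__main__":
    a = [[1, 2, 3, 4], [5, 6, 7, 8]]
    b = [[1, 0], [0, 1], [1, 1], [2, 0]]
    print(tiled_matmul(a, b))  # [[12.0, 5.0], [28.0, 13.0]]
```

The inner dimension of 4 is not a multiple of 3, so the second k-tile is only partly filled; the edge clipping handles that, which is the same bookkeeping any fixed-size matrix unit needs for odd-sized layers.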

One other interesting element of the NPU is that its performance was quoted at 16-bit floating point precision. Of the other chips listed above, Google’s first TPU works best with 8-bit integer math, while NVIDIA’s Tensor Cores also do 16-bit floating point. When asked, Eric stated that FP16 was the preferred implementation for now, although that might change depending on how the hardware is used. As an initial implementation, FP16 was more inclusive of different frameworks and trained algorithms, especially as the NPU is an inference-only design.
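
One plausible reading of "more inclusive" is that a model trained in FP32 can be moved to FP16 by rounding alone, whereas INT8 generally needs a scale factor chosen from the data. The sketch below contrasts the two using Python's `struct` half-precision (`'e'`) format; the symmetric per-tensor INT8 scheme is one common choice among several and is our assumption, not something Huawei described.

```python
import struct


def to_fp16(x: float) -> float:
    """Round-trip a float through IEEE 754 half precision (raises OverflowError past 65504)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_int8(values):
    """Symmetric per-tensor INT8 quantization: returns (codes, scale)."""
    peak = max(abs(v) for v in values)
    scale = peak / 127.0 if peak > 0 else 1.0   # the scale must be derived from the data
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


if __name__ == "__main__":
    weights = [0.0007, -0.5, 0.75, 3.2]          # hypothetical trained weights
    codes, scale = to_int8(weights)
    for w, q in zip(weights, codes):
        print(f"{w:>8}  fp16={to_fp16(w):.6g}  int8={q * scale:.6g}")
```

With these example weights, the large 3.2 value sets the INT8 scale, so the tiny 0.0007 weight rounds to a code of zero, while FP16 keeps it to roughly three significant digits without any calibration step.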

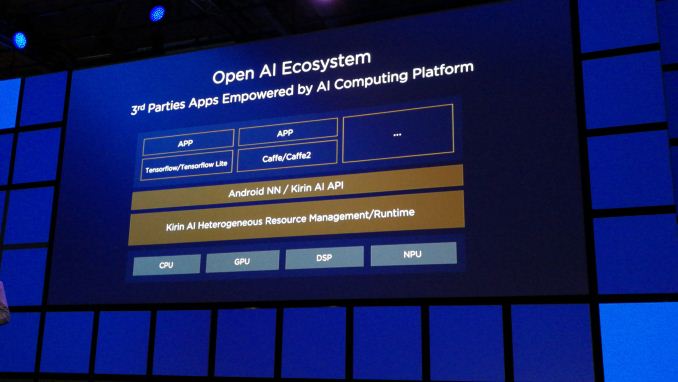

At the keynote, and confirmed in our discussions afterwards, Huawei stated that the API to use the NPU will be available to developers. The unit as a whole will support the TensorFlow and TensorFlow Lite frameworks, as well as Caffe and Caffe2. The NPU can be accessed via Huawei’s own Kirin AI API, or Android’s NN API, relying on Kirin’s AI Heterogeneous Resource Management tools to split the workloads between CPU, GPU, DSP and NPU. I suspect we’ll understand more about this nearer to the launch. Huawei did specifically state that this will be an ‘open architecture’, but did not explain exactly what that means in this context.
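
Huawei has not detailed how its resource management tools decide where each operation runs. As a hypothetical sketch only, the Python below shows one simple policy for heterogeneous placement: try the preferred accelerators in order and fall back to the CPU for anything they cannot run. The capability sets, the preference order, and the `Op`/`place` names are all our own assumptions, not the Kirin AI API.

```python
from dataclasses import dataclass

# Hypothetical capability table: op types each unit accepts (illustrative only).
CAPABILITIES = {
    "NPU": {"conv2d", "matmul", "relu"},
    "GPU": {"conv2d", "matmul", "relu", "softmax"},
    "DSP": {"fft", "resize"},
    "CPU": None,  # None means "accepts everything": the general-purpose fallback
}
PREFERENCE = ["NPU", "GPU", "DSP", "CPU"]  # assumed order: most efficient first


@dataclass
class Op:
    name: str
    kind: str


def place(ops):
    """Greedily assign each op to the first preferred unit that supports it."""
    plan = {}
    for op in ops:
        for unit in PREFERENCE:
            supported = CAPABILITIES[unit]
            if supported is None or op.kind in supported:
                plan[op.name] = unit
                break
    return plan


if __name__ == "__main__":
    graph = [Op("conv1", "conv2d"), Op("prob", "softmax"),
             Op("pre", "resize"), Op("nms", "non_max_suppression")]
    print(place(graph))
```

A production scheduler would also weigh the cost of moving tensors between units; this greedy version ignores transfers entirely and only illustrates the fallback idea.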

The Kirin 970 will be available on a development board/platform for other engineers and app developers in early Q1, as the Kirin 960 was. This will also include a community, support, dedicated tool chains and a driver development kit.

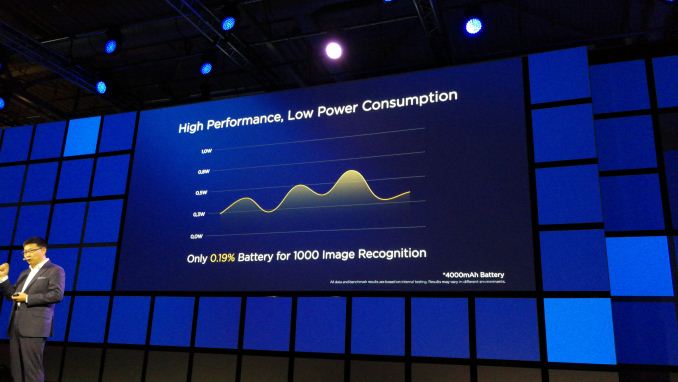

We did learn that the NPU is the size of ‘half a core’, although it was hard to tell if this meant ‘half of a single core (an A73 or an A53)’ or ‘half of the cores (all the cores put together)’. We did confirm that the die size is under 100 mm², although an exact number was not provided. With the 5.5 billion transistors Huawei quoted for the chip, that gives a transistor density of at least 55 million transistors per mm², roughly double what we see on AMD’s Ryzen CPU (about 25 million per mm² on GlobalFoundries 14nm, versus TSMC 10nm here). We were told that the NPU has its own power domain and can be both frequency gated and power gated, although during normal operation it will only be frequency gated to improve response time from idle to wake-up. Power consumption was not stated precisely (only ‘under 1W’), but Huawei did quote a test in which recognizing 1000 images drained a 4000 mAh battery by 0.19%, with power fluctuating between 0.25W and 0.67W.
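
The quoted figures can be turned into rough per-image numbers. The sketch below assumes a 3.85 V nominal cell voltage (Huawei gave only mAh) and attributes the entire 0.19% drain to the test; the density floor uses the 5.5 billion transistor count from Huawei's Kirin 970 announcement together with the under-100 mm² die size. Treat the outputs as back-of-envelope estimates, not measurements.

```python
BATTERY_MAH = 4000       # battery capacity used in Huawei's test
DRAIN_FRACTION = 0.0019  # 0.19% of the battery
IMAGES = 1000            # images recognized in the test
NOMINAL_V = 3.85         # ASSUMPTION: typical Li-ion nominal voltage, not stated by Huawei

charge_mah = BATTERY_MAH * DRAIN_FRACTION        # charge drawn, in mAh
energy_j = charge_mah / 1000 * NOMINAL_V * 3600  # Ah x V = Wh, and 1 Wh = 3600 J
per_image_mj = energy_j / IMAGES * 1000          # millijoules per image

# Run time implied if all of that energy were drawn at each quoted power level.
seconds_at_0_25w = energy_j / 0.25
seconds_at_0_67w = energy_j / 0.67

# Density floor: 5.5 billion transistors on a die of at most 100 mm^2.
TRANSISTORS = 5.5e9
DIE_MM2_MAX = 100
density_floor_m_per_mm2 = TRANSISTORS / DIE_MM2_MAX / 1e6  # million per mm^2

if __name__ == "__main__":
    print(f"charge drawn:   {charge_mah:.2f} mAh")
    print(f"energy:         {energy_j:.1f} J ({per_image_mj:.0f} mJ per image)")
    print(f"implied time:   {seconds_at_0_67w:.0f}-{seconds_at_0_25w:.0f} s")
    print(f"density floor:  {density_floor_m_per_mm2:.0f} M transistors/mm^2")
```

Under these assumptions the test used about 105 J, or roughly a tenth of a joule per image, and the two quoted power levels imply a run of about two and a half to seven minutes.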

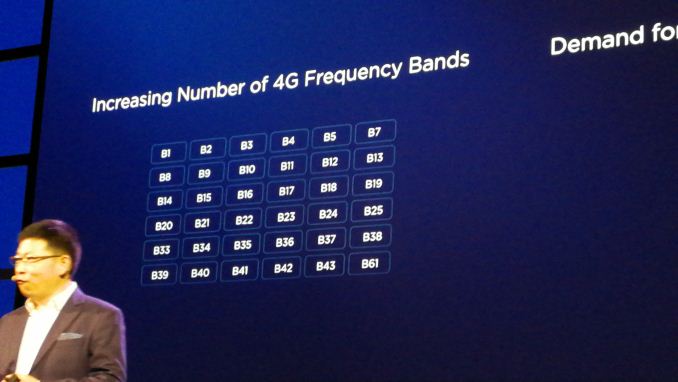

We did draw a few more specifications on the Kirin 970 out of senior management unrelated to the NPU. The display controller can support a maximum resolution of 4K, and the Kirin 970 will support two SIM cards at 4G speeds at the same time, using a time-multiplexing strategy. While the modem is rated at Category 18 for downloads, giving 1.2 Gbps with 3x carrier aggregation, 4x4 MIMO and 256-QAM, uploads are Category 13 (up to 150 Mbps). The chip can handle VoLTE on both SIMs as well. Band support is substantial, given in the list below.
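
The headline LTE numbers follow a simple scaling rule of thumb: roughly 75 Mbps per 20 MHz carrier per spatial layer at 64-QAM (6 bits per symbol), multiplied by the number of carriers and layers and adjusted for modulation depth. The sketch below applies that rule to the modem figures in this post; it assumes 2x2 MIMO for the Kirin 960 and 950 downlinks and a single layer for uplink, neither of which is stated here. Official category peaks come from 3GPP transport block tables, so real values differ slightly.

```python
BASE_MBPS = 75  # approx. peak for one 20 MHz carrier, one spatial layer, 64-QAM


def lte_peak_mbps(carriers: int, layers: int, bits_per_symbol: int) -> int:
    """Rule-of-thumb LTE peak rate scaled from a single-layer 64-QAM carrier."""
    return BASE_MBPS * carriers * layers * bits_per_symbol // 6


if __name__ == "__main__":
    print("Kirin 970 DL:", lte_peak_mbps(3, 4, 8))  # 3 carriers, 4x4 MIMO, 256-QAM
    print("Kirin 970 UL:", lte_peak_mbps(2, 1, 6))  # 2 carriers, 64-QAM
    print("Kirin 960 DL:", lte_peak_mbps(4, 2, 6))  # ASSUMED 2x2 MIMO
    print("Kirin 950 DL:", lte_peak_mbps(2, 2, 6))  # ASSUMED 2x2 MIMO
    print("Kirin 950 UL:", lte_peak_mbps(1, 1, 4))  # 1 carrier, 16-QAM
```

The Category 18 jump comes from stacking all three levers at once: more layers (4x4 MIMO), denser modulation (8 bits instead of 6) and three aggregated carriers.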

Audio is an odd one out here, with the onboard audio rated to 32-bit and 384 kHz (although SNR will depend on the codec). That’s about 12-15 bits more than needed and several times the sample rate required to cover human hearing, but high numbers are seemingly required. The storage was confirmed as UFS 2.1, with LPDDR4X-1833 for the memory, and the use of a new i7 sensor hub.
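
The "bits more than needed" point can be checked with the standard ideal-quantizer formula, where an N-bit converter offers about 6.02N + 1.76 dB of dynamic range, and with the Nyquist limit of half the sample rate. The roughly 120 dB range and 20 kHz upper limit of human hearing used below are commonly cited textbook figures that we are assuming, not numbers from Huawei.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # about 6.02 dB of range per bit


def dynamic_range_db(bits: int) -> float:
    """Theoretical SNR of an ideal N-bit quantizer for a full-scale sine wave."""
    return DB_PER_BIT * bits + 1.76


def bits_for_range(db: float) -> int:
    """Smallest bit depth whose ideal dynamic range reaches `db`."""
    return math.ceil((db - 1.76) / DB_PER_BIT)


def nyquist_khz(sample_rate_khz: float) -> float:
    """Highest frequency a given sample rate can represent."""
    return sample_rate_khz / 2


if __name__ == "__main__":
    print(f"32-bit range: {dynamic_range_db(32):.1f} dB")
    print(f"bits for ~120 dB (ASSUMED hearing range): {bits_for_range(120)}")
    print(f"384 kHz Nyquist: {nyquist_khz(384):.0f} kHz vs ~20 kHz hearing")
```

On these assumptions, about 20 bits already span the range of human hearing, so 32-bit leaves roughly 12 bits of headroom, and a 384 kHz rate can represent content up to 192 kHz, nearly ten times the audible limit.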

HiSilicon High-End Kirin SoC Lineup

| SoC | Kirin 970 | Kirin 960 | Kirin 950/955 |
|---|---|---|---|
| CPU | 4x A73 @ 2.40 GHz, 4x A53 @ 1.80 GHz | 4x A73 @ 2.36 GHz, 4x A53 @ 1.84 GHz | 4x A72 @ 2.30/2.52 GHz, 4x A53 @ 1.81 GHz |
| GPU | ARM Mali-G72MP12 @ ? MHz | ARM Mali-G71MP8 @ 1037 MHz | ARM Mali-T880MP4 @ 900 MHz |
| LPDDR4 Memory | 2x 32-bit LPDDR4 @ 1833 MHz | 2x 32-bit LPDDR4 @ 1866 MHz, 29.9 GB/s | 2x 32-bit LPDDR4 @ 1333 MHz, 21.3 GB/s |
| Interconnect | ARM CCI | ARM CCI-550 | ARM CCI-400 |
| Storage | UFS 2.1 | UFS 2.1 | eMMC 5.0 |
| ISP/Camera | Dual 14-bit ISP | Dual 14-bit ISP (Improved) | Dual 14-bit ISP, 940 MP/s |
| Encode/Decode | 2160p60 Decode, 2160p30 Encode | 2160p30 HEVC & H.264 Decode & Encode, 2160p60 HEVC Decode | 1080p H.264 Decode & Encode, 2160p30 HEVC Decode |
| Integrated Modem | Kirin 970 Integrated LTE (Category 18): DL = 1200 Mbps (3x20MHz CA, 256-QAM); UL = 150 Mbps (2x20MHz CA, 64-QAM) | Kirin 960 Integrated LTE (Category 12/13): DL = 600 Mbps (4x20MHz CA, 64-QAM); UL = 150 Mbps (2x20MHz CA, 64-QAM) | Balong Integrated LTE (Category 6): DL = 300 Mbps (2x20MHz CA, 64-QAM); UL = 50 Mbps (1x20MHz CA, 16-QAM) |
| Sensor Hub | i7 | i6 | i5 |
| NPU | Yes | No | No |
| Mfc. Process | TSMC 10nm | TSMC 16nm FFC | TSMC 16nm FF+ |
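
The bandwidth figures in the memory row follow from bus width and clock: two 32-bit channels give 8 bytes per transfer, and DDR memory transfers on both clock edges. The sketch below reproduces the listed 29.9 GB/s and 21.3 GB/s values under that assumption and applies the same formula to the Kirin 970's 1833 MHz, a value the table leaves blank; that third number is our calculation, not a HiSilicon specification.

```python
def lpddr_bandwidth_gbs(clock_mhz: float, channels: int = 2, width_bits: int = 32) -> float:
    """Peak bandwidth in GB/s: clock x 2 transfers per clock x total bus width in bytes."""
    bytes_per_transfer = channels * width_bits / 8
    return clock_mhz * 1e6 * 2 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    for soc, mhz in [("Kirin 970", 1833), ("Kirin 960", 1866), ("Kirin 950", 1333)]:
        print(f"{soc}: {lpddr_bandwidth_gbs(mhz):.1f} GB/s")
```

Since the formula matches both published values, the slightly lower 1833 MHz clock would put the Kirin 970 at about 29.3 GB/s peak, marginally below the Kirin 960.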

Related Reading

- Huawei Shows Unannounced Kirin 970 at IFA 2017: Dedicated Neural Processing Unit

- HiSilicon Kirin 960: A Closer Look at Performance and Power

- HiSilicon Announces New Kirin 950 SoC


09-04-17, 01:08 PM #7320

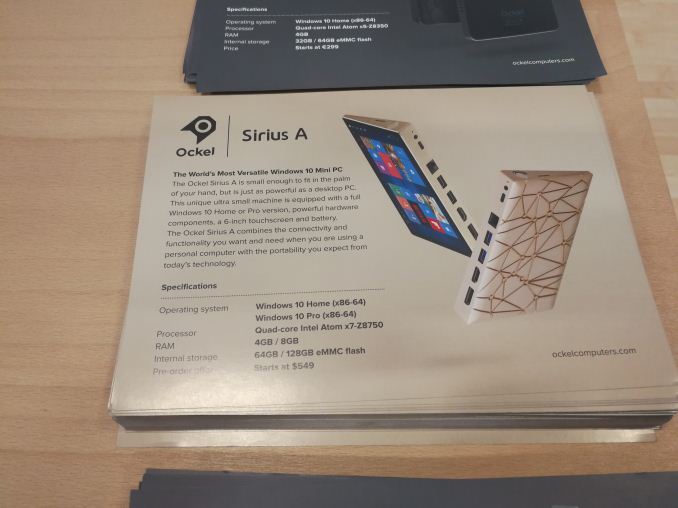

Anandtech: Ockel Sirius A is Nearing Primetime: Smartphone-Sized PC with 6-inch 1080p

Last year, at IFA 2016, I stumbled across the Ockel Sirius project. In its infancy, the concept was seemingly straightforward: put a full PC into a smartphone-sized chassis. At the time, what I held in my hands was a non-functioning mockup, before the idea went to crowdfunding. As a general rule we do not cover crowdfunding projects, so I did not write it up at the time. But I did meet the CEO and the Product Manager, and gave a lot of feedback. I bumped into them again this year while walking through the halls, and they showed me a working version two months from a full launch. Some of my suggestions had been implemented, and it looks like an interesting mash of smartphone and PC.

The Sirius A is easily as tall as, if not slightly taller than, my 6-inch smartphones, the Mate 9 and LG V30, and the requirement for PC ports means that it is also wider, particularly on one side, which has two USB 3.0 ports, an HDMI 1.4 port, a DisplayPort, Gigabit Ethernet (alongside internal WiFi) and two different ways to charge: via USB Type-C or with the bundled wall adaptor. The new model was a bit heavier than last year's prototype, mainly because this one had a battery inside: an 11Wh / 3000 mAh battery, good for 3-4 hours of video consumption, I was told. The prototype weighed around 0.7 lbs, or roughly 320 grams. That is 2-2.5x the weight of a smartphone, but given that I carry two smartphones anyway, it wasn’t much of a jump (from my perspective).
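
The battery figures can be cross-checked with two simple conversions. Assuming a typical 3.7 V nominal Li-Po cell voltage (Ockel did not state one), 11 Wh works out to roughly 2,970 mAh, in line with the 3000 mAh in the specification table, and 3-4 hours of video from 11 Wh implies an average system draw of roughly 2.75-3.7 W. The helper names are our own.

```python
NOMINAL_V = 3.7  # ASSUMPTION: typical Li-Po nominal cell voltage; not stated by Ockel


def mah_from_wh(wh: float, volts: float = NOMINAL_V) -> float:
    """Convert energy capacity (Wh) to charge capacity (mAh) at a nominal voltage."""
    return wh / volts * 1000


def average_draw_w(wh: float, hours: float) -> float:
    """Average power draw that would empty a `wh` battery in `hours`."""
    return wh / hours


if __name__ == "__main__":
    print(f"11 Wh at {NOMINAL_V} V: {mah_from_wh(11):.0f} mAh")
    print(f"average draw: {average_draw_w(11, 4):.2f}-{average_draw_w(11, 3):.2f} W")
```

An average of around 3 W for the whole system (screen, Wi-Fi, SoC) during video playback is plausible for a 2W-class Atom with software-assisted decode, which fits the modest runtime quoted.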

Perhaps the battery life figure comes down to the chipset: Ockel is using Intel’s Cherry Trail Atom platform here, in the form of the Atom x7-Z8750. This is a quad-core 1.60-2.56 GHz processor with a rated TDP of 2W. It uses Intel’s Gen8 graphics, which has native H.264 decode but only hybrid HEVC and VP9 decode, which is likely to draw extra power. The choice of Cherry Trail comes down to time and available parts: Intel has not launched an equivalent 2W processor with its newer Atom cores, and Ockel has been designing the system for over a year, meaning that parts had to be locked down early. That aside, Ockel sees the device more as a tool for professionals who need a full Windows device but do not want to carry a laptop. With Windows 10, Ockel says, the separate PC and tablet modes take care of a number of pain points with Windows touch screen interactions.

Since our last discussion, Ockel has added a fingerprint sensor for easy unlocking. Ockel is using a Goodix sensor, similar to the Huawei MateBook X and Huawei smartphones. I had requested this feature for quick access to the OS after picking the device up, rather than repeatedly typing a password. The power button in this case merely turns off the display, rather than putting the device into a sleep/hibernate state.

The hardware also supports dual display output, from both the HDMI and DisplayPort simultaneously, with the idea that a user can plug the device into desktop hardware when at a desk.

Ockel is set to offer two versions of the Sirius: the Sirius A and the Sirius A Pro. Both systems will have the same SoC, the same 1920x1080 IPS panel, and the same ports, differing in OS version (Win 10 Home vs Win 10 Pro), memory (4GB vs 8GB LPDDR3-1600) and storage (64GB vs 128GB eMMC). There is an additional microSD slot, and Ockel will be offering both versions of the device with optional 128GB microSD cards.

Pricing will start at $699 for the base Sirius A model (W10 Home, 4GB, 64GB) and $799 for the Sirius A Pro model (W10 Pro, 8GB, 128GB), with the microSD card an additional $50; both models are $150 cheaper via Indiegogo. This will come across as a lot for an Atom-based Windows 10 PC, even in such a small form factor, when full-sized laptops with similar hardware can be had for $300-$400 depending on specifications; the HP Stream and Chuwi LapBook are strong contenders. Ultimately Ockel is going after a crowd that wants a smartphone-sized mobile PC with a mobile-like experience. There will be barriers to this, such as the lack of a direct dialling app (Skype could fill in), no rear-facing camera, and potentially limited battery life.

| Ockel Sirius | Sirius A | Sirius A Pro |
|---|---|---|
| CPU | Intel Atom x7-Z8750, 4C/4T, 1.6 GHz Base, 2.56 GHz Turbo, 14nm, Airmont Cores | Intel Atom x7-Z8750, 4C/4T, 1.6 GHz Base, 2.56 GHz Turbo, 14nm, Airmont Cores |
| GPU | Intel HD Graphics 405, 16 EUs, 200 MHz Base, 600 MHz Turbo | Intel HD Graphics 405, 16 EUs, 200 MHz Base, 600 MHz Turbo |
| DRAM | 4GB LPDDR3-1600 | 8GB LPDDR3-1600 |
| Storage | 64GB Samsung eMMC 5.0 + microSD | 128GB Samsung eMMC 5.0 + microSD |
| Display | 6.0-inch 1920x1080 IPS, Glossy Multi-Touch | 6.0-inch 1920x1080 IPS, Glossy Multi-Touch |
| OS | Windows 10 Home | Windows 10 Pro |
| USB | 2 x USB 3.0, 1 x USB Type-C | 2 x USB 3.0, 1 x USB Type-C |
| Networking | 1 x Realtek RJ-45, 1 x 802.11ac Intel AC3165, Bluetooth 4.2 | 1 x Realtek RJ-45, 1 x 802.11ac Intel AC3165, Bluetooth 4.2 |
| Display Outputs | 1 x HDMI 1.4a, 1 x DisplayPort | 1 x HDMI 1.4a, 1 x DisplayPort |
| Audio | Realtek Audio Codec, Two Rear Speakers, Embedded Microphone, 3.5mm Jack | Realtek Audio Codec, Two Rear Speakers, Embedded Microphone, 3.5mm Jack |
| Sensors | Fingerprint (Goodix), Accelerometer, Gyroscope, Magnetometer | Fingerprint (Goodix), Accelerometer, Gyroscope, Magnetometer |
| Camera | Front Facing, 5MP | Front Facing, 5MP |
| Battery | Li-Po 3000 mAh (11Wh) | Li-Po 3000 mAh (11Wh) |
| Dimensions | 85.5 x 160.0 x 8.6 to 21.4 mm (3.4 x 6.3 x 0.3 to 0.8 inches) | 85.5 x 160.0 x 8.6 to 21.4 mm (3.4 x 6.3 x 0.3 to 0.8 inches) |
| Price | Indiegogo: $549, Retail: $699 | Indiegogo: $649, Retail: $799 |

The Sirius is still available to order through Indiegogo, with mass production to start in October and shipments from November 20th. Indiegogo backers also receive a set of wireless earbuds. We’ve been offered a review sample; I’m unsure at this point if we should get Ganesh to review it as a mini-PC, Brett to review it as a laptop, or someone else to review it as a smartphone.

Related Reading

- The Chuwi LapBook 14.1 Review: Redefining Affordable

- HP Stream 11 Review: A New Take On Low Cost Computing

- Zotac Updates ZBOX mini-PCs with Kaby Lake: vPro, Thunderbolt, and More
