
Thread: Anandtech News

-

01-06-19, 11:31 PM #9001

Anandtech: Samsung Announces iTunes Movie & TV Show Support In Its 2018, 2019 Smart TVs

Samsung has announced that its 2018 and 2019 Smart TVs will offer Apple iTunes Movies and TV Shows support, along with support for Apple’s AirPlay 2. The surprise announcement, made today, has wider implications for Apple’s content service as well as for its future plans for the Apple TV hardware.

The new “iTunes Movies and TV Shows” Smart TV application will be available on all new 2019 TV models from Samsung beginning this spring, and will come to 2018 models via firmware updates.

The adoption of the iTunes library of movies and TV shows gives Samsung TV users a new content delivery source, but more importantly, it gives Apple unprecedented access to a userbase of potential new customers for its service. This also marks the first time that Apple has allowed access to its service from a non-Apple device, letting customers bypass the need to buy Apple hardware such as the Apple TV.

There are still some open technical questions about how the implementation will work. For example, Samsung TVs do not support Dolby Vision, which will almost certainly affect, in some way, how HDR content is delivered and played back.

Given that the announcement was made via a Samsung press release, it seems that the deal carries some sort of exclusivity, leaving out other Smart TV vendors for the time being.

More...

-

01-07-19, 12:30 AM #9002

Anandtech: Dell at CES 2019: Alienware m15 Gets Core i9, GeForce RTX, & 4K HDR400 Display

Released last October, Dell's Alienware m15 laptop was the brand’s first attempt to address the growing market for stylish and portable gaming laptops. The 15.6-inch machine indeed looks very impressive and with new CPU, GPU, and display upgrades that Dell is going to offer, the notebook is set to get even faster and should offer performance comparable to that of small form-factor gaming desktops.

Starting in late January, Dell will offer updated versions of its Alienware m15 notebook powered by Intel’s latest mobile CPUs, up to the six-core Core i9-8950HK, and paired with NVIDIA’s GeForce RTX 2060, RTX 2070 Max-Q, or RTX 2080 Max-Q graphics processors. There will also be entry-level configurations equipped with NVIDIA’s GeForce GTX 1050 Ti, but it remains to be seen how widespread such configs will be.

Besides the new CPU and GPU options, the updated Alienware m15 will offer slightly different storage subsystems. The upcoming models will come with up to a 1 TB PCIe SSD in single-drive builds (up from 256 GB SATA SSDs today), as well as up to two 1 TB PCIe SSDs in dual-drive configurations (up from 1 TB PCIe SSD + 1 TB HDD today).

On the display side of things, top-of-the-range Alienware m15 models will come with a 4K HDR 400-rated display panel, which will be able to hit up to 500 nits brightness in HDR mode. Dell will continue to offer Full-HD and Ultra-HD IPS LCDs with cheaper models, as well as Full-HD 144 Hz TN panels for hardcore gamers seeking the highest refresh rates.

The new Alienware m15 will retain the current Epic Silver and Nebula Red chassis designs, outfitted with the AlienFX RGB lighting. The laptops are 20.99 mm (0.8264 inch) thick and weigh 2.16 kg (4.76 lbs). The upcoming laptops will also keep using a 60 Wh battery that will be installed by default and is rated for 7.1 hours of video playback, with an optional 90 Wh upgrade for build-to-order configurations (obviously, such builds will weigh more than 2.16 kilograms).

The new Alienware m15 laptops will hit the market on January 29, 2019. Prices will start at $1,580. The entry level config is said to use the “latest NVIDIA graphics”, presumably the GeForce RTX 2060 since the GeForce GTX 1050 Ti belongs to the previous generation.

General Specifications of Dell's 2019 Alienware m15

| | Alienware m15 1080p 60 Hz | Alienware m15 1080p 144 Hz | Alienware m15 4K UHD | Alienware m15 4K HDR400 |
|---|---|---|---|---|
| Display Size | 15.6" | 15.6" | 15.6" | 15.6" |
| Display Type | IPS | TN | IPS | IPS |
| Resolution | 1920×1080 | 1920×1080 | 3840×2160 | 3840×2160 |
| Brightness | 300 cd/m² | ? | 400 cd/m² | 500 cd/m² |
| Color Gamut | 72% NTSC (?) | ? | ~100% sRGB | ~100% sRGB (?) |
| Refresh Rate | 60 Hz | 144 Hz | 60 Hz | 60 Hz |

| Component | Options (all models) |
|---|---|
| CPU | Intel Core i5-8300H (4C/8T, 2.3-4 GHz, 8 MB cache, 45 W); Intel Core i7-8750H (6C/12T, 2.2-4.1 GHz, 9 MB cache, 45 W); Intel Core i9-8950HK (6C/12T, 2.9-4.5 GHz, 12 MB cache, 45 W) |
| Integrated Graphics | UHD Graphics 630 (24 EUs) |
| Discrete Graphics | NVIDIA GeForce GTX 1050 Ti with 4 GB GDDR5; NVIDIA GeForce RTX 2060 with 6 GB GDDR6; NVIDIA GeForce RTX 2070 Max-Q with 8 GB GDDR6; NVIDIA GeForce RTX 2080 Max-Q with 8 GB GDDR6 |
| RAM | 8 GB single-channel DDR4-2667; 16 GB dual-channel DDR4-2667; 32 GB dual-channel DDR4-2667 |
| Storage (single drive) | 256 GB PCIe M.2 SSD; 512 GB PCIe M.2 SSD; 1 TB PCIe M.2 SSD; 1 TB HDD with 8 GB NAND cache |
| Storage (dual drive) | 128 GB, 256 GB, 512 GB, or 1 TB PCIe M.2 SSD + 1 TB hybrid drive (8 GB NAND cache); 118 GB Intel Optane SSD + 1 TB hybrid drive (8 GB NAND cache); 256 GB + 256 GB PCIe M.2 SSDs; 512 GB + 512 GB PCIe M.2 SSDs; 1 TB + 1 TB PCIe M.2 SSDs |
| Wi-Fi + Bluetooth | Default: Qualcomm QCA6174A 802.11ac 2x2 MU-MIMO Wi-Fi and Bluetooth 4.2; Optional: Killer Wireless 1550 2x2 802.11ac and Bluetooth 5.0 |
| Thunderbolt | 1 × USB Type-C TB3 port |
| USB | 3 × USB 3.1 Gen 1 Type-A |
| Display Outputs | 1 × Mini DisplayPort 1.3; 1 × HDMI 2.0 |
| GbE | Killer E2500 GbE controller |
| Webcam | 1080p webcam |
| Other I/O | Microphone, stereo speakers, TRRS audio jack, trackpad, Alienware Graphics Amplifier port, etc. |
| Battery | 60 Wh (default); 90 Wh (optional) |
| Dimensions | Thickness: 20.99 mm (0.8264 inch); Width: 362.86 mm (14.286 inch); Depth: 275 mm (10.8 inch) |
| Weight (average) | 2.16 kg (4.76 lbs) |
| Operating System | Windows 10 or Windows 10 Pro |

Related Reading:

- Alienware Rolls Out Thin & Powerful m15 Laptop: Coffee Lake & GTX 1070 Plus 4K

- Origin PC Unveils EON15-S Laptop: Core i9, GTX 1060, Two PCIe SSDs

- Lenovo's Halo: The ThinkPad X1 Extreme Announced

- Dell's Spring Range: New 8th Gen Alienware, Laptops, and Monitors

- Alienware 13 R3: Quad-Core CPU, GeForce GTX 1060, QHD OLED, VR Ready

- Alienware Refreshes The Alienware 15 And 17 Gaming Notebooks At PAX

Source: Dell

More...

-

01-07-19, 12:30 AM #9003

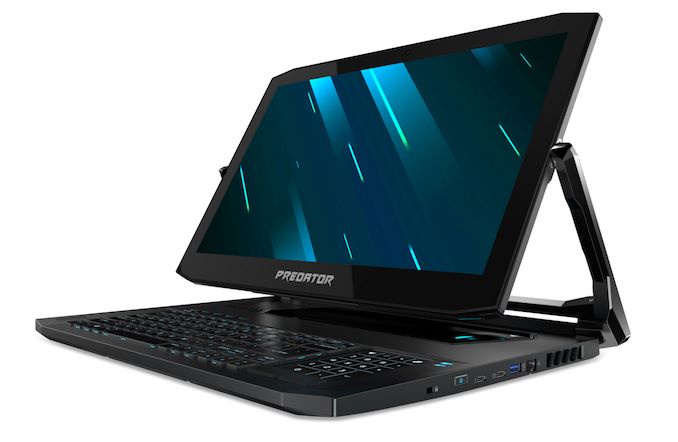

Anandtech: Acer at CES 2019: Predator Triton Gaming Laptops With RTX GPUs

Today at CES, Acer launched two new gaming laptops under its Predator brand: the Predator Triton 900 and the Predator Triton 500. Both feature NVIDIA’s just-announced GeForce RTX GPUs, but the Predator Triton 900 also brings some very unusual features for a gaming laptop.

Acer Predator Triton 900

Let’s take a look at the Predator Triton 900 first, since it’s such a unique idea in the gaming laptop space. This 17-inch notebook features the same Ezel hinge system we first saw way back with the Acer Aspire R13, and bringing this type of convertible design to a gaming laptop offers some advantages not really seen in the Ultrabook form factor. The first is as a desktop replacement: the screen can be rotated around so the laptop can be used with an external keyboard and mouse while still making use of the 17.3-inch 3840x2160 G-SYNC display. It would also be a powerful platform for any sort of touch gaming, or an insanely powerful tablet replacement. I think it’s a pretty smart way to increase the usability of a large form factor gaming laptop.

The Predator Triton 900 is well-outfitted too. It features the Intel Core i7-8750H processor, with six cores, twelve threads, and a frequency of 2.2 to 4.1 GHz in a 45-Watt envelope. Acer couples this with NVIDIA’s just-announced laptop version of the GeForce RTX 2080, with 8 GB of GDDR6. System memory is up to 32 GB of DDR4. Storage is 2 x 512 GB SSDs in RAID 0, which is of course silly but still seems to be a thing in the gaming market.

Interestingly, despite the high-performance components inside, the Predator Triton 900 is just a hair under an inch thick, at 0.94 inches.

Other gaming features include a built-in Xbox wireless receiver, allowing the laptop to connect to Xbox controllers over the faster Wi-Fi Direct, rather than Bluetooth.

We’ve seen other fast gaming laptops with a keyboard-forward design before, but the convertible design of this Acer laptop is very interesting indeed. It will be available in March for $3,999.

Acer Predator Lineup CES 2019

| Component | Triton 900 |
|---|---|
| CPU | Intel Core i7-8750H (6C/12T, 2.2-4.1 GHz, 45W TDP, 9MB cache) |
| GPU | NVIDIA GeForce RTX 2080 (8GB GDDR6) |
| RAM | up to 32 GB DDR4 |
| Storage | up to 2 x 512 GB NVMe PCIe |
| Display | 17.3-inch 3840x2160 IPS with G-SYNC |
| Thickness | 0.94 inches |
| Weight | a bit |
| Starting Price | $3,999 |

Acer Predator Triton 500

Although the bigger 900 series is likely going to get more press, the 15.6-inch Predator Triton 500 is no slouch either. It has a more traditional design, but it features the same Intel Core i7-8750H CPU and can be had with either the GeForce RTX 2060 or the RTX 2080 Max-Q. Both GPUs support overclocking as well.

All of the display options are 15.6-inch 1920x1080 IPS panels with 144 Hz refresh rates, and the models with the RTX 2080 Max-Q also feature G-SYNC. Although it can’t match the thin-bezel designs of the latest Ultrabooks, the Triton 500 does offer an 81% screen-to-body ratio, which slims down the dimensions nicely.

As with the higher tier Triton 900, the Triton 500 also offers up to 32 GB of DDR4, and up to 1 TB of PCIe SSDs in a 2 x 512 GB RAID 0 configuration.

Interestingly, Acer is claiming up to 8 hours of battery life from the Triton 500, which would be a big jump over most gaming laptops, although of course that will not be 8 hours of gaming.

Both of these models look like nice updates in the Predator lineup. The Predator Triton 500 will be available in February starting at $1799.

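Source: Acer

Acer Predator Triton 500

| Component | PT515-51-71VV | PT515-75L8 | PT515-51-765U |
|---|---|---|---|
| CPU | Intel Core i7-8750H (6C/12T, 2.2-4.1 GHz, 45W TDP, 9MB cache) | Intel Core i7-8750H (6C/12T, 2.2-4.1 GHz, 45W TDP, 9MB cache) | Intel Core i7-8750H (6C/12T, 2.2-4.1 GHz, 45W TDP, 9MB cache) |
| GPU | NVIDIA GeForce RTX 2060 (6GB GDDR6), overclockable | NVIDIA GeForce RTX 2080 Max-Q (8GB GDDR6), overclockable | NVIDIA GeForce RTX 2080 Max-Q (8GB GDDR6), overclockable |
| RAM | 16 GB DDR4 | 16 GB DDR4 | 32 GB DDR4 |
| Storage | 512 GB NVMe PCIe | 512 GB NVMe PCIe | 2 x 512 GB NVMe PCIe |
| Display | 15.6-inch 1920x1080 IPS, 144 Hz refresh rate, 3ms response | 15.6-inch 1920x1080 IPS, 144 Hz refresh rate, 3ms response, G-SYNC | 15.6-inch 1920x1080 IPS, 144 Hz refresh rate, 3ms response, G-SYNC |
| Thickness | 0.70 inches | 0.70 inches | 0.70 inches |
| Weight | 4.6 lbs | 4.6 lbs | 4.6 lbs |
| Starting Price | $1,799.99 | $2,499.99 | $2,999.99 |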

More...

-

01-07-19, 01:31 AM #9004

Anandtech: NVIDIA Announces GeForce RTX 2060: Starting At $349, Available January 15th

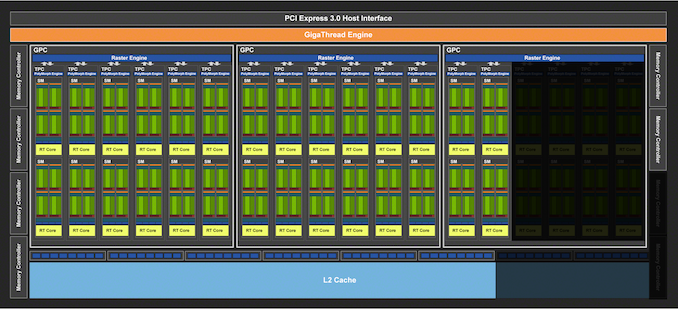

Kicking off CES 2019 with a surprisingly announcement-packed keynote session, NVIDIA this evening has announced the next member of the GeForce RTX video card family: the GeForce RTX 2060. The newest and now cheapest member of the RTX 20 series continues the cascade of Turing-architecture product releases towards cheaper and higher volume market segments. Designed to offer performance around the outgoing GeForce GTX 1070 Ti, the new card will hit the streets next week on January 15th, with prices starting at $349.

We don’t yet have top-to-bottom specifications for the card, but based on the information NVIDIA has released thus far, it looks like the GeForce RTX 2060 is based on a cut-down version of the TU106 GPU that’s already being used in the GeForce RTX 2070. This is notable because until now, NVIDIA has used a different GPU for each RTX card – TU102/2080TI, TU104/2080, TU106/2070 – making the RTX 2060 the first card in the family to share its GPU with another. It’s also a bit of a shift from the status quo for GeForce xx60 parts in general, which have traditionally featured their own GPU, with NVIDIA going smaller to reduce costs.

In any case, let’s dive into the numbers. The GeForce RTX 2060 sports 1920 CUDA cores, meaning we’re looking at a 30 SM configuration, versus RTX 2070’s 36 SMs. As the core architecture of Turing is designed to scale with the number of SMs, this means that all of the core compute features are being scaled down similarly, so the 17% drop in SMs means a 17% drop in the RT Core count, a 17% drop in the tensor core count, a 17% drop in the texture unit count, a 17% drop in L0/L1 caches, etc.

NVIDIA GeForce Specification Comparison

| | RTX 2060 Founders Edition | GTX 1060 6GB | GTX 1070 | RTX 2070 |
|---|---|---|---|---|
| CUDA Cores | 1920 | 1280 | 1920 | 2304 |
| ROPs | 48? | 48 | 64 | 64 |
| Core Clock | 1365MHz | 1506MHz | 1506MHz | 1410MHz |
| Boost Clock | 1680MHz | 1709MHz | 1683MHz | 1620MHz (FE: 1710MHz) |
| Memory Clock | 14Gbps GDDR6 | 8Gbps GDDR5 | 8Gbps GDDR5 | 14Gbps GDDR6 |
| Memory Bus Width | 192-bit | 192-bit | 256-bit | 256-bit |
| VRAM | 6GB | 6GB | 8GB | 8GB |
| Single Precision Perf. | 6.5 TFLOPS | 4.4 TFLOPS | 6.5 TFLOPS | 7.5 TFLOPS (FE: 7.9 TFLOPS) |
| "RTX-OPS" | 37T | N/A | N/A | 45T |
| SLI Support | No | No | Yes | No |
| TDP | 160W | 120W | 150W | 175W (FE: 185W) |
| GPU | TU106? | GP106 | GP104 | TU106 |
| Architecture | Turing | Pascal | Pascal | Turing |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 16nm | TSMC 16nm | TSMC 12nm "FFN" |
| Launch Date | 1/15/2019 | 7/19/2016 | 6/10/2016 | 10/17/2018 |
| Launch Price | $349 | MSRP: $249, FE: $299 | MSRP: $379, FE: $449 | MSRP: $499, FE: $599 |

Unsurprisingly, clockspeeds are going to be very close to NVIDIA’s other TU106 card, RTX 2070. The base clockspeed is down a bit to 1365MHz, but the boost clock is up a bit to 1680MHz. So on the whole, RTX 2060 is poised to deliver around 87% of the RTX 2070’s compute/RT/texture performance, which is an uncharacteristically small gap between a xx70 card and an xx60 card. In other words, the RTX 2060 is in a good position to punch above its weight in compute/shading performance.
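The SM and compute figures above can be reproduced from the numbers in the spec table. The sketch below is a back-of-the-envelope check, assuming 64 FP32 CUDA cores per Turing SM (which matches 1920 cores / 30 SMs) and 2 FLOPs per core per clock (one fused multiply-add); the helper names are hypothetical, not an NVIDIA tool.

```python
# Back-of-the-envelope check of the RTX 2060 vs RTX 2070 compute figures.
# Assumption: 64 FP32 CUDA cores per Turing SM, 2 FLOPs/core/clock (FMA).
CORES_PER_SM = 64


def sm_count(cuda_cores: int) -> int:
    """Number of SMs implied by a CUDA core count."""
    return cuda_cores // CORES_PER_SM


def fp32_tflops(cuda_cores: int, boost_mhz: int) -> float:
    """Peak single-precision throughput in TFLOPS at the rated boost clock."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


# Core counts and boost clocks as listed in the spec table
sm_2060 = sm_count(1920)
sm_2070 = sm_count(2304)
sm_drop = 1 - sm_2060 / sm_2070  # share of SMs (and RT/tensor/texture units) removed

tf_2060 = fp32_tflops(1920, 1680)
tf_2070 = fp32_tflops(2304, 1620)
compute_ratio = tf_2060 / tf_2070  # SM count x clock, relative to RTX 2070

print(f"SMs: {sm_2060} vs {sm_2070} ({sm_drop:.1%} fewer)")
print(f"FP32: {tf_2060:.2f} vs {tf_2070:.2f} TFLOPS ({compute_ratio:.1%} of RTX 2070)")
```

Run as-is, this prints 30 vs 36 SMs (16.7% fewer) and 6.45 vs 7.46 TFLOPS, or 86.4% of the RTX 2070, in the same ballpark as the "around 87%" estimate; actual boost behavior under load will differ from the rated clocks.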

However, TU106 has been trimmed more heavily on the back end, so in workloads that aren’t pure compute, the drop will be steeper. The card is shipping with just 6GB of GDDR6 VRAM, as opposed to 8GB on its bigger brother. This is because NVIDIA is not populating 2 of TU106’s 8 memory controllers, resulting in a 192-bit memory bus and meaning that with 14Gbps GDDR6, the RTX 2060 offers only 75% of the memory bandwidth of the RTX 2070. Or to put this in numbers, the RTX 2060 will offer 336GB/sec of bandwidth to the RTX 2070’s 448GB/sec.
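The bandwidth numbers follow directly from bus width and data rate. A minimal sketch, assuming each of TU106's 8 memory controllers is 32 bits wide (the RTX 2070's 256-bit bus divided by 8); the function and constant names are hypothetical.

```python
# Peak memory bandwidth = (bus width in bytes) x per-pin data rate.
# Assumption: 32 bits per TU106 memory controller (256-bit bus / 8 controllers).
CONTROLLER_BITS = 32
TOTAL_CONTROLLERS = 8
GDDR6_GBPS = 14  # per-pin data rate used on both cards


def bandwidth_gb_per_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s for a given bus width and per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


full_tu106 = TOTAL_CONTROLLERS * CONTROLLER_BITS         # RTX 2070: all 8 populated
rtx2060_bus = (TOTAL_CONTROLLERS - 2) * CONTROLLER_BITS  # RTX 2060: 2 left unpopulated

bw_2070 = bandwidth_gb_per_s(full_tu106, GDDR6_GBPS)
bw_2060 = bandwidth_gb_per_s(rtx2060_bus, GDDR6_GBPS)

print(f"RTX 2060: {rtx2060_bus}-bit, {bw_2060:.0f} GB/s")
print(f"RTX 2070: {full_tu106}-bit, {bw_2070:.0f} GB/s")
print(f"Ratio: {bw_2060 / bw_2070:.0%}")
```

This prints a 192-bit bus at 336 GB/s, a 256-bit bus at 448 GB/s, and a 75% ratio, matching the figures above.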

And since the memory controllers, ROPs, and L2 cache are all tied together very closely in NVIDIA’s architecture, this means that ROP throughput and the amount of L2 cache are also being shaved by 25%. So for graphics workloads the practical performance drop is going to be greater than the 13% mark for compute throughput, but also generally less than the 25% mark for ROP/memory throughput.
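One way to see why graphics performance should fall between those two marks is a simple Amdahl-style split of frame time. The sketch below is a toy model, not how NVIDIA or AnandTech estimate performance: it assumes a hypothetical share of the RTX 2070's frame time is compute-bound (slowed to the ~87% compute ratio) and the remainder is ROP/memory-bound (slowed to the 75% ratio).

```python
# Toy Amdahl-style model of RTX 2060 performance relative to RTX 2070.
# Assumptions (hypothetical): frame time splits cleanly into a compute-bound
# part that runs at 87% speed and a ROP/memory-bound part that runs at 75%.
COMPUTE_RATIO = 0.87
BACKEND_RATIO = 0.75


def relative_perf(compute_fraction: float) -> float:
    """RTX 2060 throughput as a fraction of RTX 2070's.

    compute_fraction is the share of the RTX 2070's frame time that is
    compute-bound; the rest is treated as ROP/memory-bound.
    """
    if not 0.0 <= compute_fraction <= 1.0:
        raise ValueError("compute_fraction must be between 0 and 1")
    # Each portion of the frame takes longer in proportion to its slowdown
    new_frame_time = (compute_fraction / COMPUTE_RATIO
                      + (1 - compute_fraction) / BACKEND_RATIO)
    return 1 / new_frame_time


for share in (0.0, 0.25, 0.5, 0.75, 1.0):
    drop = 1 - relative_perf(share)
    print(f"{share:.0%} compute-bound -> about {drop:.0%} slower than RTX 2070")
```

Whatever share is chosen, the modeled drop stays between 13% (fully compute-bound) and 25% (fully ROP/memory-bound); real games mix both and shift from scene to scene.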

I also have some specific concerns here about the inclusion of just 6GB of VRAM – especially in an era where game consoles are shipping with 8 to 12GB of VRAM – but this is something we can look at later with the eventual review.

Moving on, NVIDIA is rating the RTX 2060 for a TDP of 160W. This is down from the RTX 2070, but only slightly, as those cards are rated for 175W. Cut-down GPUs have limited options for reducing their power consumption, so it’s not unusual to see a card like this rated to draw almost as much power as its full-fledged counterpart.

Past that, looking at NVIDIA’s specifications there are no feature differences between the RTX 2060 and RTX 2070. The latter for example already lacked SLI support, so there’s nothing to take away here. Other than being slower and cheaper than its bigger sibling, the RTX 2060 offers all the features we’ve come to expect from the Turing architecture family.

In terms of card design, next week’s launch is going to be a simultaneous reference and custom card release. NVIDIA will be releasing a Founders Edition card with their usual stylings – and in the pictures NVIDIA has released, it looks exactly like the RTX 2070 – while board partners have already been working with the TU106 GPU for a few months now thanks to the RTX 2070, and have used the time to gain the experience needed to design their own boards. Like the custom RTX 2070 boards that have since launched, expect these cards to run the gamut from petite, mITX-sized cards with a single fan to large, tri-fan monsters.

Hardware aside, while NVIDIA is calling this an xx60 class card, the price tag and general power requirements for the RTX 2060 make it feel out of place. The xx60 series has traditionally been NVIDIA’s mainstream line, and up until the launch of the GTX 1060 6GB, these cards were typically around $200. The GTX 1060 6GB went to the high end of this scale at $249 for custom cards (and a whopping $299 for the Founders Edition); however, the $349 RTX 2060 is now well outside of the mainstream sweet spot for pricing. With 3 other GeForce cards above it, it may not be high-end, but it’s definitely an enthusiast card.

This also means that performance comparisons to the GTX 1060 feel similarly out of place. With 1920 CUDA cores the RTX 2060 is going to be significantly faster than the GTX 1060, but it also costs $100 more and is rated to draw 40W more power (160W vs. 120W TDP). So if anything, this feels more like the new GTX 1070 (original MSRP $379) than the new GTX 1060. We’ll have to see what real-world performance is like when we get to review the new card, but thus far it looks like NVIDIA is going to be keeping their general price/performance curve for the RTX 20 series, meaning that in terms of performance in current games, the card is only going to be a mild improvement over the GeForce GTX 10 series card it replaces at this price tier.

Though even if the performance improvement is mild, it will significantly alter the competitive landscape. If NVIDIA’s GTX 1070 Ti-like performance claims are valid, then the RTX 2060 is going to undermine AMD’s Vega 56/64 cards, and AMD will need to respond if it wants to keep holding a piece of this market.

All told then, the value proposition argument for the RTX 2060 looks to be very similar to the rest of the RTX 20 series: NVIDIA is betting consumers will be willing to pay a premium for the Turing architecture’s next-generation features – mainly ray tracing acceleration and the various applications of the tensor cores. Which is why NVIDIA needs to continue to promote these features, bring developers on board, and sell the image quality improvements in general. Still, even NVIDIA seems to realize that this isn’t going to be easy, which is why they’re also launching a new GeForce game bundle program that will include the new RTX 2060, where buyers can get a free copy of either Battlefield V or Anthem.

The GeForce RTX 2060 will be hitting retail shelves next week on January 15th, with prices starting at $349. And we intend to take a look at this new card very soon, so please stay tuned.

More...

-

01-07-19, 01:31 AM #9005

Anandtech: D-Link at CES 2019: Mesh-Enabled Exo Routers and Extenders with McAfee Security

D-Link introduced their Exo series of routers in early 2016. Since then, the traditional router form factor with middle-of-the-road specifications has become a mid-range product for all networking product vendors. In order to stand out in this competitive market segment, D-Link is announcing a new lineup of 802.11ac Exo routers and extenders with mesh networking support. The products are being made more attractive with the bundling of a McAfee security suite.

D-Link is also bringing in elements found commonly in the whole-home Wi-Fi system market (such as easier plug-and-play setup, and seamless addition of endpoints as requirements evolve over the deployment time period) into the Exo lineup. All routers and endpoints include a gigabit wired port.

The McAfee security suite adopts a cloud-based approach, and threats identified in one deployment are automatically recognized and passed on to other deployments for better security. In keeping with the current marketing buzzwords, D-Link and McAfee are using the 'cloud-based machine learning' moniker to advertise this real-time threat detection and database update approach. The security suite also includes a host of commonly requested features like parental controls and IoT device protection.

The Exo routers come with Google Assistant and Alexa support. They also include a security suite with a free two-year subscription to McAfee anti-virus for every device on the network and a five-year subscription to the IoT device protection scheme. Combined, these services represent a $700 value according to D-Link, which makes the Exo lineup a very attractive proposition in its market segment. Other networking vendors have similar tie-ups for security, but we have not yet seen anything approach the value of the D-Link / McAfee combination.

The Exo lineup will be available for purchase in Q2 2019, and includes the following products:

- AC3000 Mesh-Enabled Smart Wi-Fi Router, $199.99

- AC2600 Mesh-Enabled Smart Wi-Fi Router, $179.99

- AC1900 Mesh-Enabled Smart Wi-Fi Router, $159.99

- AC1750 Mesh-Enabled Smart Wi-Fi Router, $119.99

- AC1300 Mesh-Enabled Smart Wi-Fi Router, $79.99

- AC2000 Mesh-Enabled Wi-Fi Extender, $99.99

- AC1300 Mesh-Enabled Wi-Fi Extender, $79.99

Additional details about each product are available in the gallery below.

More...


-

01-07-19, 02:48 AM #9006

Anandtech: NVIDIA To Officially Support VESA Adaptive Sync (FreeSync) Under G-Sync Compatible Branding

The history of variable refresh gaming displays is longer than there is time available to write it up at CES. But in short, while NVIDIA has enjoyed a first-mover’s advantage with G-Sync since launching it in 2013, the ecosystem of variable refresh monitors has grown rapidly in the last half-decade. The big reason for that is that VESA, the standards body responsible for DisplayPort, added variable refresh as an optional part of the specification, creating a standardized and royalty-free means of enabling variable refresh displays. However, to date this VESA Adaptive Sync standard has only been supported on the video card side of matters by AMD, who advertises it under their FreeSync branding. Now, however – and in many people’s eyes, at last – NVIDIA is going to be jumping into the game and supporting VESA Adaptive Sync on GeForce cards, allowing gamers access to a much wider array of variable refresh monitors.

There are multiple facets here to NVIDIA’s efforts, so it’s probably best to start with the technology aspects and then relate that to NVIDIA’s new branding and testing initiatives. Though they don’t discuss it, NVIDIA has internally supported VESA Adaptive Sync for a couple of years now; rather than putting G-Sync modules in laptops, they’ve used what’s essentially a form of Adaptive Sync to enable “G-Sync” on laptops. As a result we’ve known for some time now that NVIDIA could support VESA Adaptive Sync if they wanted to, however until now they haven’t done this.

Coming next week, this is changing. On January 15th, NVIDIA will be releasing a new driver that enables VESA Adaptive Sync support on GeForce GTX 10 and GeForce RTX 20 series (i.e. Pascal and newer) cards. There will be a bit of gatekeeping involved on NVIDIA’s part – it won’t be enabled automatically for most monitors – but the option will be there to enable variable refresh (or at least try to enable it) for all VESA Adaptive Sync monitors. If a monitor supports the technology – be it labeled VESA Adaptive Sync or AMD FreeSync – then NVIDIA’s cards can finally take advantage of their variable refresh features. Full stop.

At this point there are some remaining questions on the matter – in particular whether they’re going to do anything to enable this over HDMI as well or just DisplayPort – and we’ll be tracking down answers to those questions. Past that, the fact that NVIDIA already has experience with VESA Adaptive Sync in their G-Sync laptops is a promising sign, as it means they won’t be starting from scratch on supporting variable refresh on monitors without their custom G-Sync modules. Still, a lot of eyes are going to be watching NVIDIA and looking at just how well this works in practice once those drivers roll out next week.

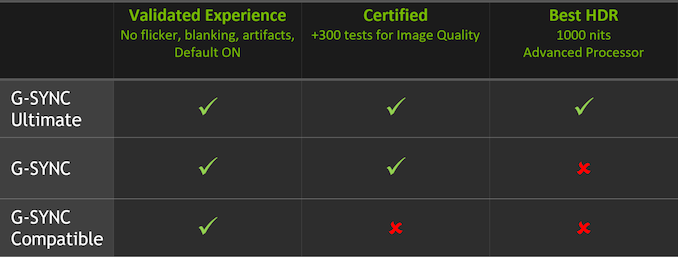

G-Sync Compatible Branding

Past the base technology aspects, as is often the case with NVIDIA there are the branding aspects. NVIDIA has held since the first Adaptive Sync monitors were released that G-Sync delivers a better experience – and admittedly they have often been right. The G-Sync program has always had a validation/quality control aspect to it that the open VESA Adaptive Sync standard inherently lacks, which over the years has led to a wide range in monitor quality among Adaptive Sync displays. Great monitors would look fantastic and behave correctly to deliver the best experience, while poorer monitors would have quirks like narrow variable refresh ranges or pixel overdrive issues, greatly limiting the actual usefulness of their variable refresh rate features.

Looking to exert some influence and quality control over the VESA Adaptive Sync ecosystem, NVIDIA’s solution to this problem is that they are establishing a G-Sync Compatible certification program for these monitors. In short NVIDIA will be testing every Adaptive Sync monitor they can get their hands on, and monitors that pass NVIDIA’s tests will be G-Sync Compatible certified.

Right now NVIDIA isn’t saying much about what their compatibility testing entails. Beyond the obvious items – the monitor works and doesn’t suffer obvious image quality issues like dropping frames – it’s not clear whether this certification process will also involve refresh rate ranges, pixel overdrive features, or other quality-of-life aspects of variable refresh technology. Or for that matter whether there will be pixel response time requirements, color space requirements, etc. (It is noteworthy that none of the monitors approved so far are listed as supporting variable overdrive.)

At any rate, NVIDIA says they have tested over 400 monitors so far, and of those monitors 12 will be making their initial compatibility list. That is a rather low pass rate, indicating that NVIDIA’s standards aren’t going to be very loose here, but the list still covers a number of popular monitors from Acer, ASUS, Agon, AOC, and bringing up the rest of the alphabet, BenQ.

As for what G-Sync Compatibility gets gamers and manufacturers, the big advantage is that officially compatible monitors will have their variable refresh features enabled automatically by NVIDIA’s drivers, similar to how they handle standard G-Sync monitors. So while all VESA Adaptive Sync monitors can be used with NVIDIA’s cards, only officially compatible monitors will have this enabled by default. It is, if nothing else, a small carrot to both consumers and manufacturers to build and buy monitors that meet NVIDIA’s functionality requirements.

Meanwhile on the business side of matters, the big wildcard that remains is whether NVIDIA is going to try to monetize the G-Sync Compatible program in any way, as the company has traditionally done this for value-added features. For example, will manufacturers also need to pay NVIDIA to have their monitors officially flagged as compatible? After all, official compatibility is not a requirement to be used with NVIDIA’s cards, it’s merely a perk. And meanwhile supporting VESA Adaptive Sync monitors is likely to hurt NVIDIA’s G-Sync module revenues.

If nothing else, I fully expect that NVIDIA will charge manufacturers to use the G-Sync branding in promotional materials and on product boxes, as NVIDIA owns their branding. But I’m curious whether certification itself will also be something the company charges for.

G-Sync HDR Becomes G-Sync Ultimate

Finally, along with the G-Sync Compatible branding, NVIDIA is also rolling out a new branding initiative for HDR-capable G-Sync monitors. These monitors, which until now have informally been referred to as G-Sync HDR monitors, will now go under the G-Sync Ultimate branding.

In practice, very little is changing here besides establishing an official brand name for the recent (and forthcoming) crop of HDR-capable G-Sync monitors, all of which have been co-developed with NVIDIA anyhow. So this means all Ultimate monitors will need to support HDR with high refresh rates and 1000nits+ peak brightness, use a full array local dimming backlight, support the P3 D65 color space, etc. Given that it’s likely only a matter of time until G-Sync capable monitors with lesser HDR features hit the market, it’s a good move for NVIDIA to establish a well-defined brand and quality requirements now, so that a merely HDR-capable G-Sync monitor isn’t confused with the recent high-end monitors that can actually approach a proper HDR experience.


More...

-

01-07-19, 03:47 AM #9007

Anandtech: Seagate at CES 2019: LaCie Mobile Drive and SSD External Storage Solutions

Seagate has made it customary to launch a few external storage solutions at CES each year. This time around, the LaCie brand is getting a couple of newly designed all-aluminium enclosures. The two have an 'eye-catching diamond-cut' design, and complement the look and feel of the current Apple notebooks (LaCie's primary target market).

The LaCie Mobile Drive (external hard-drive) comes in capacities ranging from 2TB (10mm thick) to 5TB (20mm thick), while the LaCie Mobile SSD (external SSD) comes in capacities up to 2TB. Both have a USB 3.1 Gen 2 Type-C interface, and come with the LaCie Toolkit software (for backup / mirroring purposes that external drives are commonly used for).

The Mobile Drive is a capacity play (up to 5TB capacity), while the Mobile SSD is a performance one (with speeds of up to 540 MBps). Both products include a 1-month subscription to the Adobe Creative Cloud All Apps plan. The LaCie Mobile Drive will be available in January and comes with a 2-year warranty. The Mobile SSD comes with a 3-year warranty as well as a 3-year subscription to the Seagate Rescue Data Recovery plan. Pricing and exact details of the retail availability are yet to be disclosed.

In addition to the LaCie products, Seagate's Backup Plus family is getting the new Ultra Touch lineup in 1TB and 2TB capacities with a woven textile enclosure. This product line comes with features such as automatic backup with multi-device folder sync and data protection with hardware encryption. The 1TB version is priced at $70 and the 2TB at $90.

The Backup Plus Slim (1TB and 2TB capacities) and Backup Plus Portable (4TB and 5TB capacities) now come with lustrous aluminum finishes. The new Backup Plus models include a complimentary 2-month subscription to the Adobe Creative Cloud Photography Plan. The products are expected to be available for purchase later this quarter.


More...

-


01-07-19, 11:00 AM #9009

Anandtech: CES 2019: Huawei Launches the Matebook 13

Today one of the best notebooks I’ve ever tested is getting an update: Huawei’s new Matebook 13 is the generational update to the Matebook X. In it we get the latest generation of Whiskey-Lake U processors, the same 2160x1440 3:2 display, an optional MX150 variant, and a new cooling implementation based on a shark fin design.

More...

-

01-07-19, 02:15 PM #9010

Anandtech: Netgear Orbi Whole-Home Wi-Fi System to Adopt Qualcomm's Wi-Fi 6 802.11ax Platform

Netgear's Orbi Wi-Fi system / mesh networking product line has been well-received in the market since its introduction in Q3 2016. Since then, Netgear has been regularly rolling out new hardware and firmware upgrades to keep up with the market requirements. All the Orbi products in the market currently are based on Qualcomm's Wi-Fi 5 (802.11ac) platforms.

Wi-Fi 6 (802.11ax) has had a relatively slow start in the market, with the absence of client devices holding back widespread acceptance of the new routers from various vendors. Even though many products were announced at CES 2018, they started rolling out in retail only towards the end of last year. Netgear's flagship Wi-Fi 6 routers (RAX80 and RAX120) were launched in November 2018, and the Broadcom-based RAX80 is already available for purchase. The Qualcomm-based RAX120 will be available in retail shortly.

Given these two parallel developments, it comes as no surprise that Netgear will incorporate a Wi-Fi 6 (802.11ax) platform into the next-generation Orbi. The product will continue to use Netgear's patented Fastlane3 technology (with a dedicated 4x4 802.11ax backhaul, in addition to 5 GHz and 2.4 GHz channels for use by clients). The Wi-Fi 6 backhaul enables true gigabit wireless links between the Orbi nodes. Netgear also announced that the Orbi products will continue to use a Qualcomm platform (in fact, the early specifications seem to indicate that the RAX120 platform is being used with the addition of another 802.11ax radio).

Pricing for the Orbi kits with Wi-Fi 6 was not announced, as the products are slated to become available only in H2 2019. The announcement is particularly interesting because vendors such as TP-Link are moving to Broadcom-based 802.11ax platforms for their whole-home Wi-Fi / mesh networking products.


More...
